A data analyst at a proptech startup opens a popular real estate platform to track rental prices in downtown Chicago. On her office laptop, a two-bedroom apartment shows up at $2,150. Later that evening, she checks the same listing from home, and now it’s $2,275. The next morning, her teammate in Austin, Texas, pulls the same property and sees $2,095.

Same listing. Within 24 hours. Three different prices.

This isn’t a bug. It’s how modern real estate platforms work.

Real estate data today is dynamic, geo-sensitive, and heavily personalized. Platforms adjust listings, rankings, and even visibility based on user location, behavior, and demand signals. According to industry estimates, over 70% of large real estate platforms apply some form of location-based personalization, while listings themselves can update multiple times per day as prices, availability, and status change.

For teams trying to build pricing models, market intelligence tools, or investment insights, this creates a fundamental challenge: how do you collect data that actually reflects the market?

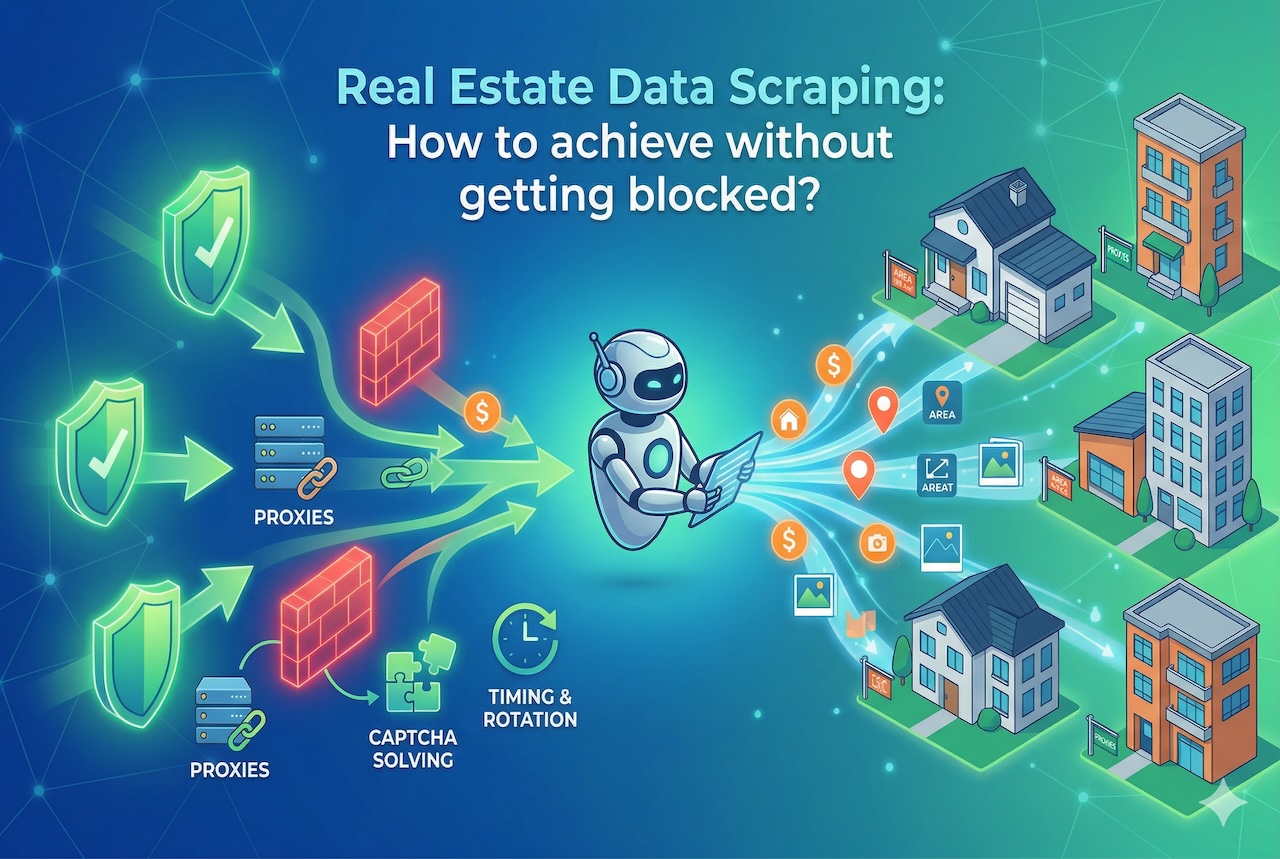

The answer lies in combining residential proxies, city-level targeting, long-running crawlers, and stable session management into a unified scraping strategy.

Why Real Estate Scraping Is Uniquely Challenging

Real estate platforms are not static catalogs. They are living systems designed to serve different content to different users at different times.

Listings are often filtered or ranked based on geography. A user browsing from one city may see different results than someone in another, even when searching for the same property. In some cases, listings are partially hidden or deprioritized depending on inferred buyer intent or regional demand.

At the same time, major platforms like Zillow, Redfin, and Realtor.com deploy increasingly sophisticated anti-bot systems. These systems analyze IP reputation, browsing patterns, session behavior, and request timing to detect automated access.

The result is a hostile environment for traditional scraping. Datacenter IPs are often flagged instantly. Requests get throttled. CAPTCHAs appear. Data becomes incomplete or unreliable.

And even when access is maintained, the data itself may be biased by personalization.

Residential Proxies: The Foundation of Reliable Scraping

This is where residential proxies become essential.

Unlike datacenter IPs, residential proxies route traffic through real consumer internet connections assigned by ISPs. From the platform’s perspective, the request looks like it is coming from an ordinary user browsing from home.

This matters for two reasons.

First, residential IPs carry significantly higher trust scores, allowing requests to pass through anti-bot defenses with far fewer interruptions. Second, they enable precise geographic targeting, which is critical for real estate data.

Instead of appearing as a generic server request, each session becomes a realistic, location-specific user interaction. This dramatically improves both access reliability and data accuracy.

Achieving True City-Level Coverage

Real estate is inherently local. Prices can vary dramatically not just by city, but by neighborhood, ZIP code, or even street.

Collecting data from a single geographic vantage point produces a distorted view of the market. A listing seen from New York may differ from the same listing viewed from Los Angeles, not only in price but in ranking, visibility, or available details.

Residential proxies, like Decodo Proxy, solve this by allowing requests to originate from specific cities or regions. This enables true city-level data collection, where each request reflects the experience of a local user.

However, precision matters. Effective geo-targeting requires consistent routing within the same region, not random global rotation. Mixing locations within a session can trigger detection or introduce inconsistencies in the data.
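A minimal sketch of what consistent routing can look like in code. Many residential proxy gateways encode the target city and a sticky session ID in the proxy username, but the exact syntax differs by provider. The host `proxy.example.com`, the port, and the `user-country-...-city-...-session-...` layout below are assumptions for illustration, not any specific provider's format.

```python
from dataclasses import dataclass
from urllib.parse import quote

# Hypothetical gateway. Real providers define their own host, port,
# and username syntax for city targeting and sticky sessions.
GATEWAY_HOST = "proxy.example.com"
GATEWAY_PORT = 8000


@dataclass(frozen=True)
class GeoTarget:
    country: str
    city: str


def build_proxy_url(user: str, password: str, target: GeoTarget, session_id: str) -> str:
    """Return a proxy URL pinned to one city and one sticky session.

    The username layout is an illustrative convention only.
    """
    city = target.city.lower().replace(" ", "_")
    username = (
        f"{user}-country-{target.country.lower()}"
        f"-city-{city}-session-{session_id}"
    )
    # Percent-encode credentials so special characters cannot break the URL.
    return (
        f"http://{quote(username, safe='')}:{quote(password, safe='')}"
        f"@{GATEWAY_HOST}:{GATEWAY_PORT}"
    )


def proxies_for(target: GeoTarget, session_id: str,
                user: str = "USERNAME", password: str = "PASSWORD") -> dict:
    """Build a scheme-to-proxy mapping of the kind HTTP clients such as requests accept."""
    url = build_proxy_url(user, password, target, session_id)
    return {"http": url, "https": url}
```

Because the city and the session ID are fixed together when the mapping is built, every request made with that mapping exits from the same region. Changing location means building a new mapping with a new session ID, which keeps locations from mixing inside one session.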

When implemented correctly, city-level coverage reveals insights that would otherwise remain hidden, including localized pricing strategies, demand patterns, and regional listing behavior.

Building Long-Running Crawlers That Don’t Break

Short-lived scraping scripts may work for small experiments, but they fail quickly at scale.

Real estate data collection requires infrastructure that can run continuously over days or weeks without interruption. Listings must be tracked over time, updates must be captured as they happen, and gaps in data must be minimized.

Long-running crawlers achieve this by combining distributed execution, intelligent scheduling, and continuous monitoring. Tasks are parallelized across multiple agents, allowing large datasets to be collected efficiently. Priority-based scheduling ensures that high-value listings are refreshed more frequently, while less critical data is collected at a lower cadence.
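Priority-based scheduling can be sketched with a min-heap keyed on each listing's next due time. This is one possible design, not a prescribed one: the `RefreshScheduler` class and the interval values are hypothetical, and a production crawler would typically persist the queue and distribute it across workers.

```python
import heapq
import itertools
from dataclasses import dataclass, field


@dataclass(order=True)
class ScheduledTask:
    due_at: float                        # when the listing should next be fetched
    seq: int                             # tie-breaker that keeps insertion order
    url: str = field(compare=False)
    interval: float = field(compare=False)


class RefreshScheduler:
    """Refresh high-value listings often and low-value listings rarely."""

    def __init__(self) -> None:
        self._heap: list = []
        self._counter = itertools.count()

    def add(self, url: str, interval: float, now: float = 0.0) -> None:
        if interval <= 0:
            raise ValueError("interval must be positive")
        heapq.heappush(
            self._heap, ScheduledTask(now, next(self._counter), url, interval)
        )

    def pop_due(self, now: float) -> list:
        """Return URLs due at `now` and re-queue each one for its next refresh."""
        due = []
        while self._heap and self._heap[0].due_at <= now:
            task = heapq.heappop(self._heap)
            due.append(task.url)
            heapq.heappush(
                self._heap,
                ScheduledTask(now + task.interval, next(self._counter),
                              task.url, task.interval),
            )
        return due
```

A listing in a fast-moving neighborhood might be added with a 60-second interval and a stale one with a one-hour interval. Each worker then calls `pop_due` with the current time and fetches whatever comes back.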

Equally important is resilience. Failures are inevitable at scale. The system must detect blocks, retry intelligently, and recover without manual intervention.
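As a rough illustration of detecting blocks and retrying intelligently, the sketch below treats certain status codes and page markers as block signals and waits with exponential backoff and jitter between attempts. The status codes, the marker strings, and the `fetch` callable (which is assumed to return a `(status, body)` pair) are assumptions. Real platforms signal blocks in different ways, so the heuristics need tuning per site.

```python
import random
import time
from typing import Callable, Optional, Tuple

BLOCK_STATUSES = {403, 429}                      # assumed block signals
BLOCK_MARKERS = ("captcha", "unusual traffic")   # assumed page markers


def looks_blocked(status: int, body: str) -> bool:
    """Heuristic check for a block page or a throttling response."""
    if status in BLOCK_STATUSES:
        return True
    lowered = body.lower()
    return any(marker in lowered for marker in BLOCK_MARKERS)


def backoff_delay(attempt: int, base: float = 2.0, cap: float = 300.0,
                  rng: Callable[[], float] = random.random) -> float:
    """Exponential backoff with full jitter: random wait up to base * 2**attempt."""
    return rng() * min(cap, base * (2 ** attempt))


def fetch_with_retry(fetch: Callable[[str], Tuple[int, str]], url: str,
                     max_attempts: int = 5,
                     sleep: Callable[[float], None] = time.sleep) -> Optional[str]:
    """Return the page body, or None if every attempt was blocked or failed."""
    for attempt in range(max_attempts):
        try:
            status, body = fetch(url)
        except OSError:
            # Network-level failure: treat it like a failed attempt and retry.
            status, body = 0, ""
        if status == 200 and not looks_blocked(status, body):
            return body
        if attempt < max_attempts - 1:
            sleep(backoff_delay(attempt))
    return None
```

Returning `None` instead of raising lets a long-running crawler log the failure, reschedule the listing, and move on without manual intervention.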

Stable Sessions: The Key to Staying Undetected

One of the most overlooked aspects of scraping is session behavior.

Modern platforms do not just track IP addresses. They track sessions — combinations of IP, cookies, browser fingerprints, and user behavior. Rapidly changing these signals can appear unnatural and trigger detection.

Stable sessions solve this problem.

By maintaining a consistent identity for the duration of a session, including IP address, user-agent, and cookies, crawlers can mimic real human browsing behavior. Instead of appearing as thousands of disconnected requests, the system behaves like a series of normal users navigating the platform.

The key is balance. Sessions should be stable within a browsing cycle but refreshed between cycles. Reusing an identity for too long lets the platform build a profile of it, while rotating too aggressively looks unnatural and triggers detection.
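One way to express "stable within a cycle, refreshed between cycles" is a small manager that hands out the same identity until a request budget is spent or the cycle is ended explicitly. The class names, the budget of 40 requests, and the user-agent strings are illustrative assumptions. A fuller version would tie `session_id` to the proxy's sticky session so the IP stays fixed as well.

```python
import random
import uuid
from dataclasses import dataclass, field
from typing import Optional

# Example user-agent strings only; a real pool should be kept current.
USER_AGENTS = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.4 Safari/605.1.15",
)


@dataclass
class SessionIdentity:
    session_id: str                      # also usable as a proxy sticky-session key
    user_agent: str
    cookies: dict = field(default_factory=dict)
    requests_made: int = 0


class SessionManager:
    """Keep one identity per browsing cycle and start fresh between cycles."""

    def __init__(self, max_requests_per_cycle: int = 40) -> None:
        self.max_requests_per_cycle = max_requests_per_cycle
        self._identity: Optional[SessionIdentity] = None

    def _new_identity(self) -> SessionIdentity:
        return SessionIdentity(
            session_id=uuid.uuid4().hex[:12],
            user_agent=random.choice(USER_AGENTS),
        )

    def acquire(self) -> SessionIdentity:
        """Return the current identity, replacing it once its budget is spent."""
        if (self._identity is None
                or self._identity.requests_made >= self.max_requests_per_cycle):
            self._identity = self._new_identity()
        self._identity.requests_made += 1
        return self._identity

    def end_cycle(self) -> None:
        """Drop the identity so the next cycle starts with new cookies and ID."""
        self._identity = None
```

Within a cycle the crawler reads and updates `identity.cookies` and sends `identity.user_agent` on every request, so the platform sees one consistent visitor.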

Scaling Without Getting Blocked

Efficiency at scale requires more than just infrastructure. It requires strategy.

Smart rotation ensures that IP changes happen at logical boundaries rather than randomly. Adaptive rate management adjusts request speed based on platform responsiveness, reducing the likelihood of triggering rate limits.
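Adaptive rate management can be approximated with a delay that grows multiplicatively when the platform pushes back and shrinks slowly when responses are healthy. The thresholds and factors below are assumptions chosen for illustration, not measured values.

```python
class AdaptiveDelay:
    """Adjust the pause between requests based on how the platform responds."""

    def __init__(self, min_delay: float = 1.0, max_delay: float = 60.0,
                 backoff: float = 2.0, recovery: float = 0.9,
                 slow_threshold: float = 5.0) -> None:
        self.min_delay = min_delay
        self.max_delay = max_delay
        self.backoff = backoff                # multiplier applied on pushback
        self.recovery = recovery              # multiplier applied on success
        self.slow_threshold = slow_threshold  # seconds; slower counts as pushback
        self.delay = min_delay

    def record(self, status: int, latency: float) -> float:
        """Update the delay from one response and return the new value."""
        throttled = status in (429, 503) or latency > self.slow_threshold
        if throttled:
            self.delay = min(self.max_delay, self.delay * self.backoff)
        elif 200 <= status < 300:
            self.delay = max(self.min_delay, self.delay * self.recovery)
        return self.delay
```

The crawler calls `record` after every response and sleeps for the returned number of seconds before the next request on that session. Backing off quickly and recovering slowly keeps the request rate below the point where rate limits start to trigger.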

When blocks do occur, automated failover mechanisms reroute requests through new identities, ensuring continuity without manual intervention.

This combination of controlled behavior and dynamic adaptation allows large-scale data collection to remain both efficient and sustainable.

Maintaining Data Quality at Scale

Collecting data is only half the challenge. Ensuring its accuracy is equally important.

At scale, errors can propagate quickly. Duplicate listings, outdated prices, and incomplete records can distort analysis if left unchecked.

Robust validation processes help maintain data integrity. Cross-referencing listings across multiple sources, normalizing timestamps, and verifying geographic consistency all contribute to a more reliable dataset.
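A small sketch of three of these checks: timestamp normalization, geographic consistency, and de-duplication. The record fields (`listing_id`, `city`, `lat`, `lon`, `price`, `seen_at`) are a hypothetical schema, and the Chicago bounding box is a rough approximation for illustration. Cross-referencing against other sources is not shown.

```python
from datetime import datetime, timezone

# Approximate bounding boxes (lat_min, lat_max, lon_min, lon_max); illustrative only.
CITY_BOUNDS = {"chicago": (41.64, 42.03, -87.94, -87.52)}


def normalize_timestamp(value: str) -> datetime:
    """Parse an ISO 8601 string and convert to UTC (naive values assumed UTC)."""
    parsed = datetime.fromisoformat(value)
    if parsed.tzinfo is None:
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed.astimezone(timezone.utc)


def in_city(record: dict) -> bool:
    """Check that the coordinates fall inside the claimed city's bounding box."""
    bounds = CITY_BOUNDS.get(record["city"].lower())
    if bounds is None:
        return False
    lat_min, lat_max, lon_min, lon_max = bounds
    return (lat_min <= record["lat"] <= lat_max
            and lon_min <= record["lon"] <= lon_max)


def clean(records: list) -> list:
    """Drop invalid rows and keep only the newest observation per listing."""
    latest: dict = {}
    for record in records:
        price = record.get("price")
        if price is None or price <= 0 or not in_city(record):
            continue
        seen = normalize_timestamp(record["seen_at"])
        kept = latest.get(record["listing_id"])
        if kept is None or seen > kept["seen_at"]:
            latest[record["listing_id"]] = {**record, "seen_at": seen}
    return list(latest.values())
```

Comparing timestamps only after converting them to UTC matters here, because observations collected through proxies in different cities may carry different local offsets.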

Without these safeguards, even the most sophisticated scraping system can produce misleading results.

Conclusion

The analyst from the opening scenario eventually rebuilt her data pipeline using residential proxies, city-level targeting, and stable session management. The inconsistent pricing she once saw as a mystery became a measurable, explainable pattern.

That is the real goal of efficient real estate scraping.

It is not just about collecting more data. It is about collecting data that reflects reality rather than personalization, bias, or incomplete snapshots.

In an industry where listings change constantly and platforms are designed to resist automation, success depends on infrastructure that behaves like real users at scale.

Residential proxies, combined with stable sessions and long-running crawlers, make that possible.

Featured Image generated by Google Gemini.
