In modern data-driven systems, speed is no longer optional. Organizations rely on real-time data to power analytics, automation, and decision-making. In this context, two performance factors stand out: latency and bandwidth. Latency determines how quickly a single request returns data, while bandwidth determines how much data can move through the pipeline in a given time. Together, they shape the efficiency and responsiveness of data acquisition pipelines. Achieving both low latency and high bandwidth is essential for building systems that are not only fast but also scalable and cost-effective.

Understanding Latency and Bandwidth

Latency refers to the time it takes for a request to be sent, processed, and returned with usable data. High latency leads to delays, timeouts, and slower data pipelines, which can disrupt workflows that depend on timely insights. Bandwidth measures how much data can be transferred in a given period. Limited bandwidth creates bottlenecks, restricting the volume of data that can be processed and slowing down large-scale operations. Optimizing one without the other is not enough: a pipeline with fast responses but a narrow pipe stalls on large payloads, and a wide pipe with slow responses stalls on many small requests. True performance comes from balancing both.
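As a rough illustration of how the two factors combine, the time for one request can be approximated as a fixed latency plus the payload size divided by the bandwidth. The sketch below uses that simplified model; the function name and the sample numbers are assumptions chosen for illustration, not measurements.

```python
def transfer_time_seconds(payload_bytes: int, latency_s: float,
                          bandwidth_bytes_per_s: float) -> float:
    """Estimate one request's duration: fixed latency + size / bandwidth."""
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return latency_s + payload_bytes / bandwidth_bytes_per_s


# Small payload (10 KB): almost all of the time is latency.
small = transfer_time_seconds(10_000, latency_s=0.2,
                              bandwidth_bytes_per_s=10_000_000)

# Large payload (500 MB): almost all of the time is bandwidth-bound.
large = transfer_time_seconds(500_000_000, latency_s=0.2,
                              bandwidth_bytes_per_s=10_000_000)

print(f"small: {small:.3f} s, large: {large:.1f} s")
```

Under this model, cutting latency helps workloads made of many small requests, while adding bandwidth helps workloads dominated by large payloads.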

Leveraging Scraping Templates for Efficiency

One of the most effective ways to improve performance is through the use of scraping templates. These pre-configured extraction models are designed for common data sources and structures, allowing systems to bypass repetitive setup and parsing logic. Instead of building extraction rules from scratch for each target, templates provide a streamlined approach that reduces processing time and improves consistency. For example, proxy platforms like Decodo offer ready-to-use scraping templates and integrated data collection solutions that simplify extraction workflows while maintaining high performance. By minimizing complexity at the parsing stage, scraping templates help lower latency and increase throughput simultaneously.

Key benefits include:

- Faster extraction with minimal setup

- Reduced parsing complexity

- Improved consistency across datasets
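The idea of a reusable template can be sketched in a few lines. The example below is a minimal illustration, not the API of Decodo or any other platform: `PRODUCT_TEMPLATE`, `apply_template`, and the sample HTML are hypothetical, and the regex-based extraction is a stand-in for whatever parser a real template would use.

```python
import re

# Hypothetical template: field name -> precompiled pattern with one capture group.
# Compiling once and reusing the template avoids rebuilding rules per request.
PRODUCT_TEMPLATE = {
    "title": re.compile(r"<h1[^>]*>(.*?)</h1>", re.S),
    "price": re.compile(r'class="price"[^>]*>\s*\$?([\d.]+)'),
}


def apply_template(html: str, template: dict) -> dict:
    """Extract every field the template defines; missing fields become None."""
    record = {}
    for field, pattern in template.items():
        match = pattern.search(html)
        record[field] = match.group(1).strip() if match else None
    return record


sample_html = '<h1 class="name"> Blue Widget </h1><span class="price">$19.99</span>'
record = apply_template(sample_html, PRODUCT_TEMPLATE)
print(record)
```

Because every page of the same type goes through the same template, the output fields stay consistent across datasets. Regular expressions are fragile on real-world HTML, so a production template would more likely wrap a proper HTML parser.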

Single Endpoint Architecture

Another critical factor is the adoption of a single endpoint architecture. Rather than managing multiple APIs or endpoints for different types of data requests, a unified endpoint simplifies communication between systems. This reduces connection overhead, minimizes routing complexity, and can cut the number of round trips a client has to make. As a result, requests are processed more efficiently, leading to faster response times and lower latency. A single endpoint also makes integration cleaner and easier to maintain, which further contributes to overall system performance.
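One way to picture a single endpoint is a client that always talks to the same URL and describes what it wants in the request body. The sketch below only builds the requests and sends nothing over the network; the endpoint URL, the `target` field, and the option names are hypothetical and do not correspond to any specific provider's API.

```python
import json

# Hypothetical unified endpoint: every request type goes to the same URL.
ENDPOINT_URL = "https://api.example.com/v1/scrape"


def build_request(target: str, url: str, **options) -> tuple[str, str]:
    """Return (endpoint, JSON body); the body, not the URL, selects the job type."""
    payload = {"target": target, "url": url, **options}
    return ENDPOINT_URL, json.dumps(payload, sort_keys=True)


endpoint_a, body_a = build_request("product_page", "https://example.com/item/1")
endpoint_b, body_b = build_request(
    "search_results", "https://example.com/search?q=widgets", output="json"
)

print(endpoint_a == endpoint_b)  # both requests share one endpoint
print(body_b)
```

Since every call goes to the same host, a client can keep one persistent connection open and reuse it, which avoids repeating the TCP and TLS handshakes for each request type.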

Reducing Time-to-Scrape

Time-to-scrape, defined as the total time from initiating a request to receiving usable data, is another key metric in performance optimization. Reducing time-to-scrape requires improvements across multiple layers, including network speed, rendering efficiency, and data parsing. Techniques such as parallel processing, intelligent request scheduling, and caching can significantly reduce delays. By ensuring that each step in the pipeline is optimized, organizations can achieve faster data retrieval without compromising accuracy.
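Two of the techniques mentioned above, parallel processing and caching, can be sketched with the Python standard library. In this illustration `fetch` is a stand-in that sleeps instead of making a network call, and the URLs are placeholders; a real pipeline would substitute its own HTTP client.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from functools import lru_cache

fetched_urls = []  # records which URLs actually triggered a (simulated) fetch


@lru_cache(maxsize=1024)
def fetch(url: str) -> str:
    """Simulated download; repeated calls for the same URL are served from cache."""
    fetched_urls.append(url)
    time.sleep(0.05)  # stands in for network and rendering delay
    return f"<html>content of {url}</html>"


def scrape_all(urls, max_workers: int = 8):
    """Fetch URLs concurrently; results come back in the same order as the input."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fetch, urls))


urls = [f"https://example.com/page/{i}" for i in range(8)]

start = time.perf_counter()
first_pass = scrape_all(urls)   # eight simulated fetches run in parallel
parallel_elapsed = time.perf_counter() - start

second_pass = scrape_all(urls)  # every URL is already cached

print(f"first pass took {parallel_elapsed:.2f} s for {len(urls)} pages")
print(f"simulated fetches performed: {len(fetched_urls)}")
```

Threads suit this workload because the time is spent waiting on I/O rather than computing. The cache only helps when the same URL is requested more than once and stale data is acceptable for the use case.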

Output Formats

The format in which data is delivered also plays an important role in performance. Convenient output formats such as JSON, XML, CSV, or Markdown can reduce the need for additional transformation and processing. When data is delivered in a structured and ready-to-use format, downstream systems can immediately consume it without incurring extra overhead. This not only reduces latency but also improves overall pipeline efficiency by minimizing unnecessary computation.
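As a small illustration, the same extracted records can be serialized to JSON or CSV with the standard library so that downstream consumers do not need a conversion step. The `records` list and helper names below are made up for the example.

```python
import csv
import io
import json

# Illustrative records, as they might come out of an extraction step.
records = [
    {"title": "Blue Widget", "price": 19.99},
    {"title": "Red Widget", "price": 24.50},
]


def to_json(rows) -> str:
    """Serialize rows as a JSON array of objects."""
    return json.dumps(rows)


def to_csv(rows) -> str:
    """Serialize rows as CSV, using the first row's keys as the header."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()),
                            lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


json_text = to_json(records)
csv_text = to_csv(records)
print(json_text)
print(csv_text)
```

JSON preserves types and nesting, which suits APIs and applications; CSV is flatter but loads directly into spreadsheets and many analytics tools.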

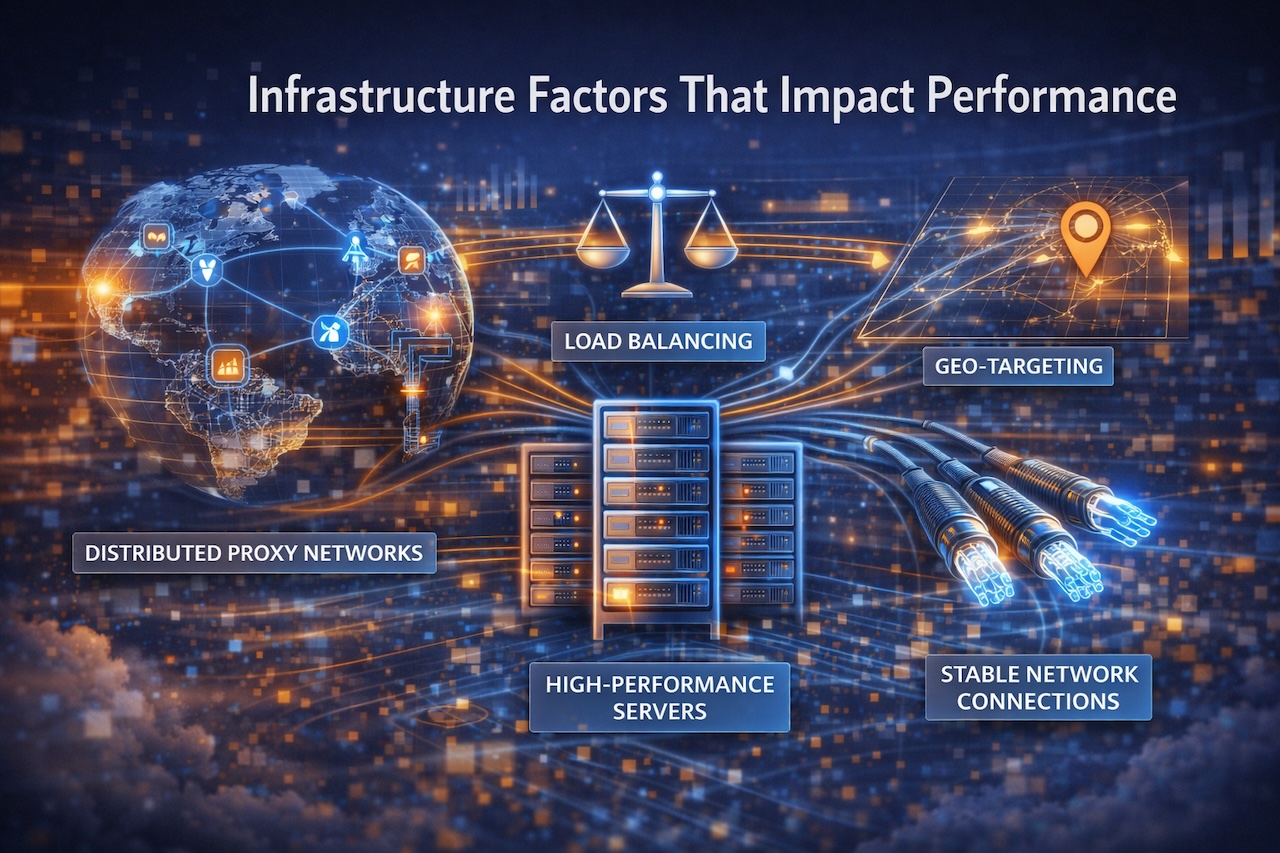

Infrastructure Factors That Impact Performance

Behind the scenes, infrastructure plays a crucial role in achieving both low latency and high bandwidth. Distributed proxy networks, load balancing, and geo-targeting help ensure that requests are routed efficiently and processed as close to the source as possible. High-performance servers and stable network connections further reduce delays and improve throughput. These infrastructure elements work together to create a robust environment where data can be collected quickly and reliably.
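A very simplified picture of geo-targeting combined with load balancing is a pool that picks a proxy in the requested region and rotates through the available hosts. The sketch below assumes round-robin rotation and uses invented host names; real proxy networks typically apply more sophisticated routing.

```python
from itertools import cycle

# Hypothetical proxy hosts grouped by region.
PROXIES_BY_REGION = {
    "us": ["us-1.proxy.example", "us-2.proxy.example"],
    "de": ["de-1.proxy.example"],
}


class ProxyPool:
    """Round-robin proxy selection per region, with a fallback region."""

    def __init__(self, proxies_by_region: dict):
        self._cycles = {
            region: cycle(hosts) for region, hosts in proxies_by_region.items()
        }

    def pick(self, region: str, fallback: str = "us") -> str:
        # Use the requested region when available, otherwise the fallback region.
        rotation = self._cycles.get(region, self._cycles[fallback])
        return next(rotation)


pool = ProxyPool(PROXIES_BY_REGION)
picks = [pool.pick("us") for _ in range(3)]
print(picks)  # rotation alternates between the two US hosts
```

Rotating requests spreads load evenly across hosts, and choosing a region near the target site keeps the network path short.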

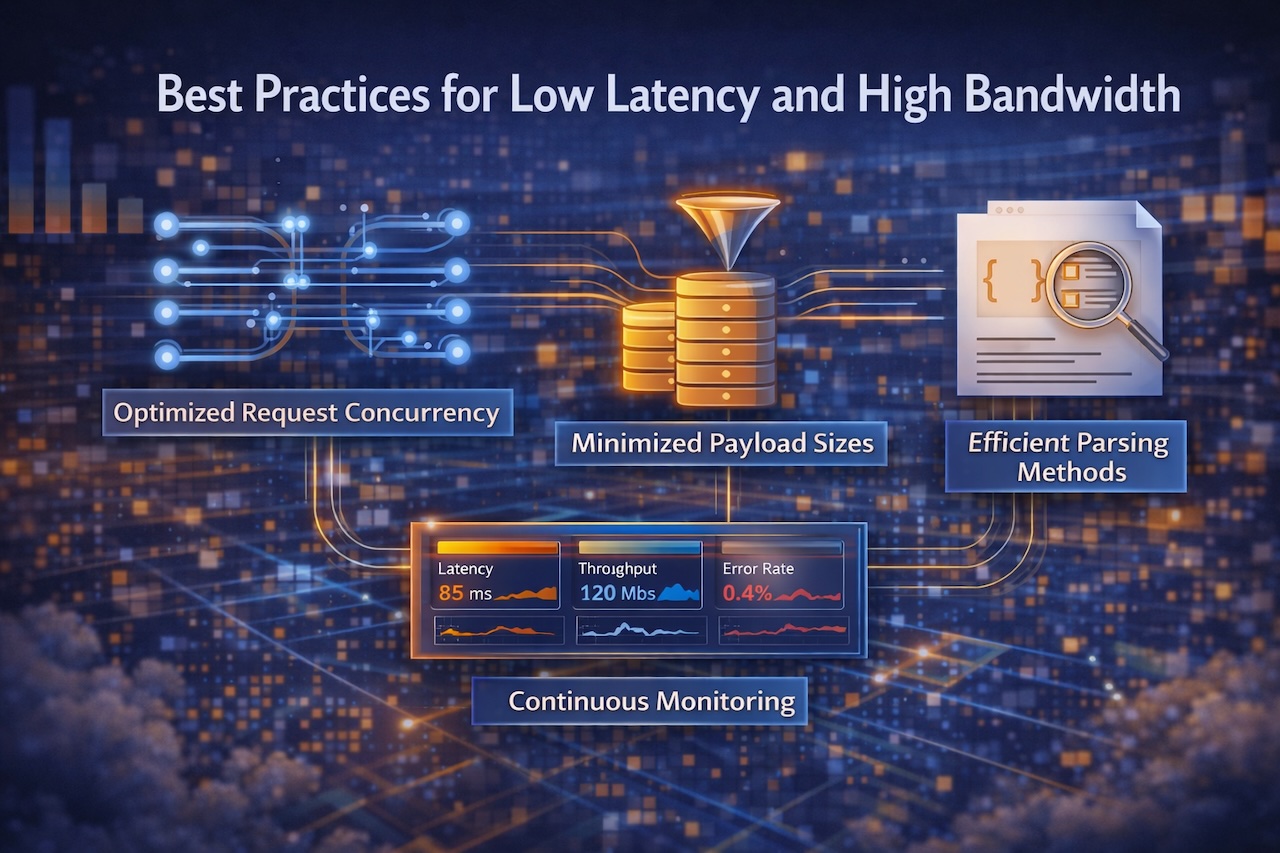

Best Practices for Low Latency and High Bandwidth

To maximize performance, organizations should adopt a set of best practices. These include optimizing request concurrency to handle multiple data streams simultaneously, minimizing unnecessary payload sizes to reduce transfer times, and selecting efficient parsing methods that can handle complex data structures. Continuous monitoring of key metrics such as latency, throughput, and error rates is also essential. By tracking these indicators, teams can identify bottlenecks and make data-driven improvements to their systems.
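The metrics named above can be computed from a simple log of request outcomes. The sketch below uses a nearest-rank percentile and invented sample data; the field names `latency_s` and `ok` are assumptions for the example.

```python
import math


def percentile(sorted_values, pct: int) -> float:
    """Nearest-rank percentile of an already sorted, non-empty list."""
    rank = math.ceil(pct * len(sorted_values) / 100)
    return sorted_values[max(rank, 1) - 1]


def summarize(samples, window_seconds: float) -> dict:
    """Compute latency percentiles, throughput, and error rate for one window."""
    latencies = sorted(s["latency_s"] for s in samples)
    errors = sum(1 for s in samples if not s["ok"])
    return {
        "p50_s": percentile(latencies, 50),
        "p95_s": percentile(latencies, 95),
        "throughput_rps": len(samples) / window_seconds,
        "error_rate": errors / len(samples),
    }


# Invented data: ten requests observed in a five-second window, one failure.
latency_values = [0.12, 0.15, 0.11, 0.90, 0.14, 0.13, 0.16, 0.10, 0.12, 2.50]
samples = [{"latency_s": v, "ok": v < 2.0} for v in latency_values]

report = summarize(samples, window_seconds=5.0)
print(report)
```

Tracking a high percentile alongside the median matters because a few slow requests, like the 2.5-second outlier here, can hide behind a healthy-looking typical latency.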

Conclusion

Achieving low latency and high bandwidth is not about any single tool or technology; it is about designing efficient systems from end to end. By leveraging optimized architectures, prebuilt templates, structured outputs, and high-performance infrastructure, organizations can build data acquisition pipelines that are both fast and scalable. The result is not only improved performance but also reduced operational costs and faster access to actionable insights. In a world where data speed defines competitive advantage, optimizing for both latency and bandwidth is essential.

Images generated by ChatGPT.
