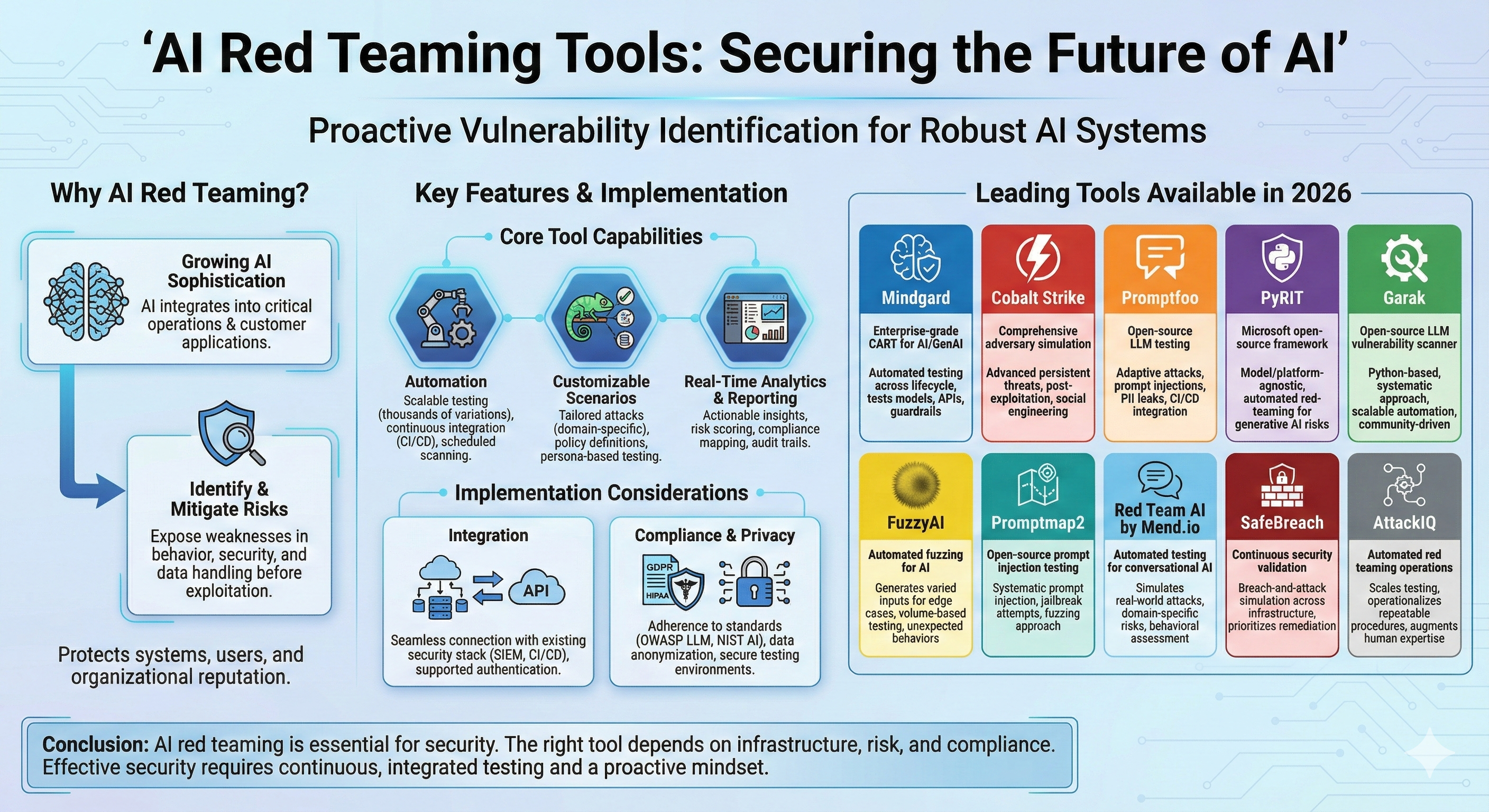

AI red teaming has become essential for organizations deploying artificial intelligence systems. As AI models become more sophisticated and integrate into critical operations, identifying vulnerabilities before malicious actors exploit them helps protect both your systems and users.

Red teaming tools help you simulate real-world attacks against AI systems to expose weaknesses in model behavior, security controls, and data handling. These platforms range from open-source frameworks designed for specific testing scenarios to comprehensive commercial solutions that automate vulnerability discovery across your entire AI infrastructure.

Selecting the right tool depends on your specific needs, including the types of AI systems you deploy, your compliance requirements, and whether you need automated testing or manual control. This guide examines leading platforms available in 2026 and the key considerations that will help you make an informed decision for your organization's security posture.

Image generated by Google Gemini.

1. Mindgard

Mindgard is the market leader in AI red teaming tools, offering an enterprise-grade security platform designed for AI and GenAI systems. The platform specializes in Continuous Automated Red Teaming (CART), which continuously tests your AI applications, models, and agents throughout their entire lifecycle.

Unlike traditional cybersecurity tools that often miss AI-specific vulnerabilities, Mindgard uses automated red teaming to identify risks across large language models, image models, audio systems, and multimodalAI applications. You can integrate it seamlessly with major MLOps and CI/CD pipelines to catch vulnerabilities before they reach production.

The platform tests beyond just models, evaluating APIs and guardrails to uncover weaknesses that malicious actors could exploit. Mindgard provides actionable remediation guidance to help your team address security gaps efficiently.

What sets Mindgard apart is its ability to handle the unique security challenges of generative AI and LLMs through dynamic, automated testing. You get comprehensive coverage across your AI infrastructure without manual intervention, making it practical for organizations managing multiple AI systems at scale.

2. Cobalt Strike

Cobalt Strike stands as one of the most widely used commercial red teaming tools in the cybersecurity industry. Originally developed in 2012 by Raphael Mudge, it provides comprehensive capabilities for simulating advanced persistent threats and conducting post-exploitation operations.

The platform centers around its Beacon payloads, which enable command-and-control operations during red team engagements. You can leverage its Malleable C2 feature to modify network indicators, allowing you to mimic different malware profiles across various scenarios.

Cobalt Strike integrates social engineering processes with collaboration features designed for team-based operations. The tool generates reports specifically formatted to support blue team training, helping organizations improve their defensive capabilities.

While Cobalt Strike excels at adversary simulation and penetration testing, it primarily targets traditional cybersecurity threats rather than AI-specific vulnerabilities. You'll find it most effective for testing network defenses, simulating APT behaviors, and conducting comprehensive security assessments.

The platform continues to shape professional red team engagements through its mature capabilities and integrated workflows. Your organization can use it ethically to identify security gaps and strengthen defenses against evolving threats.

3. Promptfoo

Promptfoo is an open-source platform designed for testing and evaluating LLM applications. You can use it to identify vulnerabilities like prompt injections, PII leaks, and jailbreaks in your AI systems.

The tool generates adaptive attacks tailored to your specific application rather than relying on static jailbreak tests. This approach helps you uncover security issues that generic testing might miss.

Promptfoo supports declarative configuration through YAML, JSON, and CSV formats. You can also import datasets directly from HuggingFace. The platform integrates with command line workflows and CI/CD pipelines, making it practical for continuous security testing.

You'll find features for comparing multiple models side by side, including GPT, Claude, Gemini, and Llama. The tool automates red teaming security scans and generates vulnerability reports for your review.

The platform has gained adoption among developers building LLM applications, agents, and RAG systems. It provides structured test cases that emulate real end-user experiences, helping you assess both security and performance. Promptfoo aligns with emerging standards like the OWASP LLM Top 10 and NIST's AI Risk Management Framework.

4. PyRIT

PyRIT (Python Risk Identification Tool for generative AI) is Microsoft's open-source framework for red-teaming generative AI systems. You can use this tool to proactively identify security and safety risks in your AI models before deployment.

The framework operates as a model- and platform-agnostic solution. This means you can test various generative AI systems regardless of their underlying architecture or hosting platform.

PyRIT enables you to probe for novel harms, risks, and jailbreaks in multimodal generative AI models. The tool provides flexibility for security professionals and machine learning engineers who need to assess AI systems systematically.

You'll find that PyRIT supports automation in your red teaming workflows. This capability allows you to scale your testing beyond manual prompt injection.

The framework is particularly useful when evaluating AI systems for potential vulnerabilities that attackers might exploit. Microsoft designed PyRIT to be extensible, so you can adapt it to your specific testing requirements and integrate it into your existing security assessment processes.

5. Garak

Garak is an open-source framework specifically designed to identify vulnerabilities in Large Language Models. Written entirely in Python, it serves as a comprehensive red-teaming and assessment toolkit for generative AI systems.

The tool takes a systematic approach to testing LLM security by probing for weaknesses across various attack vectors. You can use Garak to evaluate your models against common vulnerabilities that might otherwise go undetected in standard testing procedures.

One of Garak's key strengths is its focus on scalability in AI security testing. While human red-teaming provides valuable intelligence, it doesn't scale efficiently due to the expense of human expertise and the limited availability of skilled practitioners. Garak addresses this challenge through automation.

The framework has built an active community since its release, contributing to its ongoing development and refinement. You'll find it particularly useful if you're looking for a Python-based solution that integrates into existing development workflows.

Garak also serves as the foundation for enterprise solutions like Redbolt AI, which extends its capabilities with additional features for red-team testing and runtime protection for AI agents and LLMs.

6. FuzzyAI

FuzzyAI specializes in automated fuzzing techniques to test AI systems for vulnerabilities and unexpected behaviors. The tool generates varied inputs designed to probe your models for edge cases and failure modes that standard testing might miss.

You can use FuzzyAI to systematically explore how your AI systems respond to unusual or malformed inputs. This approach helps identify weaknesses in input validation, prompt injection vulnerabilities, and areas where your model produces unreliable outputs.

The platform focuses on automation, allowing you to run extensive test suites without manual intervention. This makes it practical for continuous integration workflows that require regular security assessments.

FuzzyAI's fuzzing methodology differs from traditional red teaming by emphasizing volume and variation over targeted attack scenarios. You generate thousands of test cases to uncover unexpected model behaviors that may not be apparent through manual testing alone.

The tool works across different AI model types and deployment scenarios. Your team can integrate it into existing development pipelines to catch vulnerabilities before production deployment.

7. Promptmap2

Promptmap2 is an open-source AI red teaming tool designed to help you test and evaluate the security of large language models. It focuses on identifying vulnerabilities through systematic prompt injection and jailbreak attempts.

The tool provides automated testing capabilities that allow you to probe AI systems for weaknesses in their guardrails and safety mechanisms. You can use Promptmap2 to generate adversarial prompts and assess how your models respond to various attack vectors.

Promptmap2 stands out for its fuzzing approach to AI security testing. It helps you discover edge cases and unexpected behaviors in your language models by sending diverse prompt variations and monitoring the outputs.

The tool is particularly useful if you need a lightweight solution for testing prompt-based vulnerabilities. You can integrate it into your development workflow to catch potential security issues before deployment.

Since Promptmap2 is open source, you have full transparency into how it operates and can customize it to meet your specific testing requirements. This makes it accessible for teams working with limited budgets or those who prefer community-driven tools.

8. Red Team AI by Mend.io

Red Team AI by Mend.io automates security testing for conversational AI systems and large language models. The platform simulates real-world attack scenarios to identify vulnerabilities before they can be exploited in production environments.

You can use this tool to test your AI models against domain-specific risks and common attack vectors. It integrates directly into your application security workflow, enabling you to incorporate red teaming into your standard development process.

The platform emphasizes user-centric testing, helping you understand how your AI system behaves under various conditions. This approach reveals practical weaknesses that might not surface during standard testing procedures.

Mend.io offers behavioral risk assessment capabilities through its premium tier. These features enable you to evaluate your AI systems for prompt injection, data leakage, and other security concerns specific to AI applications.

The tool provides automation depth that reduces manual testing effort while maintaining comprehensive coverage. You can run continuous security assessments on your conversational AI interfaces and LLM-powered features throughout the development lifecycle.

9. SafeBreach

SafeBreach provides continuous security validation through breach-and-attack simulation capabilities that extend to AI security testing. The platform allows you to simulate real-world attack scenarios against your AI systems and infrastructure to identify vulnerabilities before attackers exploit them.

You can use SafeBreach to test your AI model defenses through automated attack simulations that run continuously across your environment. The platform integrates with your existing security stack to provide actionable insights into gaps in your AI system's protection.

SafeBreach's approach focuses on validating security controls rather than simply identifying theoretical vulnerabilities. You receive prioritized remediation guidance based on which simulated attacks succeeded against your defenses. This helps you focus resources on the most critical security gaps in your AI infrastructure.

The platform supports testing across multiple attack vectors, including data exfiltration, adversarial inputs, and model manipulation attempts. You can customize attack scenarios to match your specific threat landscape and compliance requirements.

SafeBreach delivers detailed analytics on your security posture through its dashboard, showing you exactly where your AI systems remain vulnerable to attacks.

10. AttackIQ

AttackIQ addresses a fundamental challenge in traditional red teaming: the bottleneck created by limited time, tools, and personnel. The platform allows you to automate known attack scenarios and operationalize repeatable testing procedures, which frees your security experts to focus on novel threats and unknown vulnerabilities.

You can use AttackIQ to programmatically scale your red teaming operations beyond what manual testing allows. The platform shifts your approach from sporadic, expert-dependent assessments to continuous, automated validation of your security controls.

AttackIQ focuses on augmenting your existing red team capabilities rather than replacing human expertise. Your security professionals remain essential for interpreting results and investigating complex scenarios that automation cannot fully address.

The tool helps you establish a consistent testing cadence across your environment. You can validate that your defenses work as intended against known attack patterns while your team focuses on emerging threats. This division of labor between automated and manual testing makes your red teaming program more efficient and comprehensive.

Key Features of AI Red Teaming Tools

AI red teaming tools distinguish themselves through three critical capabilities: automated testing workflows that scale security assessments, flexible attack scenarios tailored to specific model vulnerabilities, and analytics systems that translate technical findings into actionable intelligence.

Automation Capabilities

Modern AI red teaming tools automate the generation and execution of adversarial attacks against your models. This automation allows you to test thousands of prompt variations, jailbreak attempts, and edge cases without manual intervention.

The best tools include scheduling features that enable continuous security assessments throughout your model's lifecycle. You can configure automated testing pipelines that integrate with your CI/CD workflows, ensuring every model update undergoes security validation before deployment.

Core automation features include:

- Batch testing across multiple attack vectors simultaneously

- Automated prompt generation using adversarial templates

- Scheduled scanning for regression testing

- API-driven testing for integration with existing workflows

Some platforms use AI agents to autonomously discover novel attack patterns, moving beyond static test libraries. This capability helps you identify zero-day vulnerabilities that manual testing might miss.

Customizable Attack Scenarios

You need the ability to tailor red teaming exercises to your specific use case and risk profile. Generic attacks won't expose vulnerabilities unique to your domain, whether you're testing a customer service chatbot or a code-generation model.

Quality tools provide libraries of prebuilt attack scenarios that span prompt injection, data poisoning, bias exploitation, and model inversion. You can modify these templates or create custom scenarios that reflect industry-specific threats.

Customization options typically include:

- Domain-specific test suites (healthcare, finance, legal)

- Adjustable severity thresholds for risk classification

- Custom policy definitions for compliance requirements

- Persona-based testing simulating different attacker profiles

The flexibility to combine multiple attack types in complex scenarios gives you a realistic assessment of how your model handles sophisticated threats.

Real-Time Reporting and Analytics

Effective red teaming tools transform raw test results into clear security insights you can act on immediately. Real-time dashboards show you which attacks succeeded, the severity of discovered vulnerabilities, and recommended remediation steps.

Your analytics platform should categorize findings by risk level, attack type, and affected model components. This organization helps you prioritize fixes based on actual impact rather than theoretical concerns.

Essential reporting capabilities include:

| Feature | Purpose |

|---|---|

| Risk scoring | Quantifies vulnerability severity |

| Trend analysis | Tracks security posture over time |

| Compliance mapping | Links findings to regulatory requirements |

| Audit trails | Documents all testing activities |

The best tools generate executive summaries for stakeholders while providing technical teams with detailed logs, reproduction steps, and proof-of-concept exploits. This dual-level reporting ensures that everyone, from security engineers to leadership, understands your AI security posture.

Implementation Considerations

Deploying AI red teaming tools requires careful planning around technical integration and regulatory requirements. Your existing security workflows and compliance obligations will shape how you implement these solutions.

Integration with Existing Security Infrastructure

You need to evaluate how AI red-teaming tools integrate with your current security stack. Most enterprise solutions offer API integrations with SIEM platforms, vulnerability management systems, and CI/CD pipelines to automate testing workflows.

Look for tools that support your existing authentication mechanisms, such as SAML or OAuth. This ensures your team can access red teaming platforms without creating separate credential management processes.

Your tool should generate reports in formats compatible with your security dashboards. Standard outputs include JSON, CSV, and SAST/DAST formats that feed directly into tools such as Splunk, Datadog, and Azure Security Center.

Consider deployment models that match your infrastructure. Cloud-based solutions offer faster setup, while on-premises or hybrid options provide greater control for organizations with strict data residency requirements. Container-based deployments using Docker or Kubernetes enable you to scale testing across multiple environments.

Compliance and Data Privacy

You must ensure your red teaming activities comply with regulations such as GDPR and CCPA, as well as industry-specific frameworks such as HIPAA and PCI DSS. Many tools now include built-in compliance checks aligned with the OWASP Top 10 for LLMs and the NIST AI Risk Management Framework.

Data handling becomes critical when testing AI models with sensitive information. Choose tools that offer data anonymization, synthetic data generation, or secure enclave testing to prevent exposure of production data during adversarial testing.

Your organization may need audit trails that document every test performed, including timestamps, test parameters, and results. This documentation proves due diligence to regulators and supports incident response if vulnerabilities are exploited.

Check whether your tool vendor maintains SOC 2 Type II certification or ISO 27001 compliance. These certifications confirm that the platform adheres to security best practices for handling your AI assets and test results.

Conclusion

AI red teaming is no longer optional for organizations deploying advanced models in production. As AI systems expand across enterprise workflows, customer-facing applications, and autonomous processes, the attack surface grows alongside them.

The tools covered in this guide offer different approaches, from continuous automated testing to open-source prompt injection frameworks and breach simulation platforms. The right choice depends on your infrastructure, risk tolerance, compliance obligations, and maturity level.

Ultimately, effective AI security requires more than a single tool. It demands continuous testing, integration with existing security workflows, and a proactive mindset. Organizations that embed red teaming into their AI lifecycle will be better positioned to identify vulnerabilities early, protect users, and maintain trust in an increasingly AI-driven world.

Featured Image generated by Google Gemini.

Share this post

Leave a comment

All comments are moderated. Spammy and bot submitted comments are deleted. Please submit the comments that are helpful to others, and we'll approve your comments. A comment that includes outbound link will only be approved if the content is relevant to the topic, and has some value to our readers.

Comments (0)

No comment