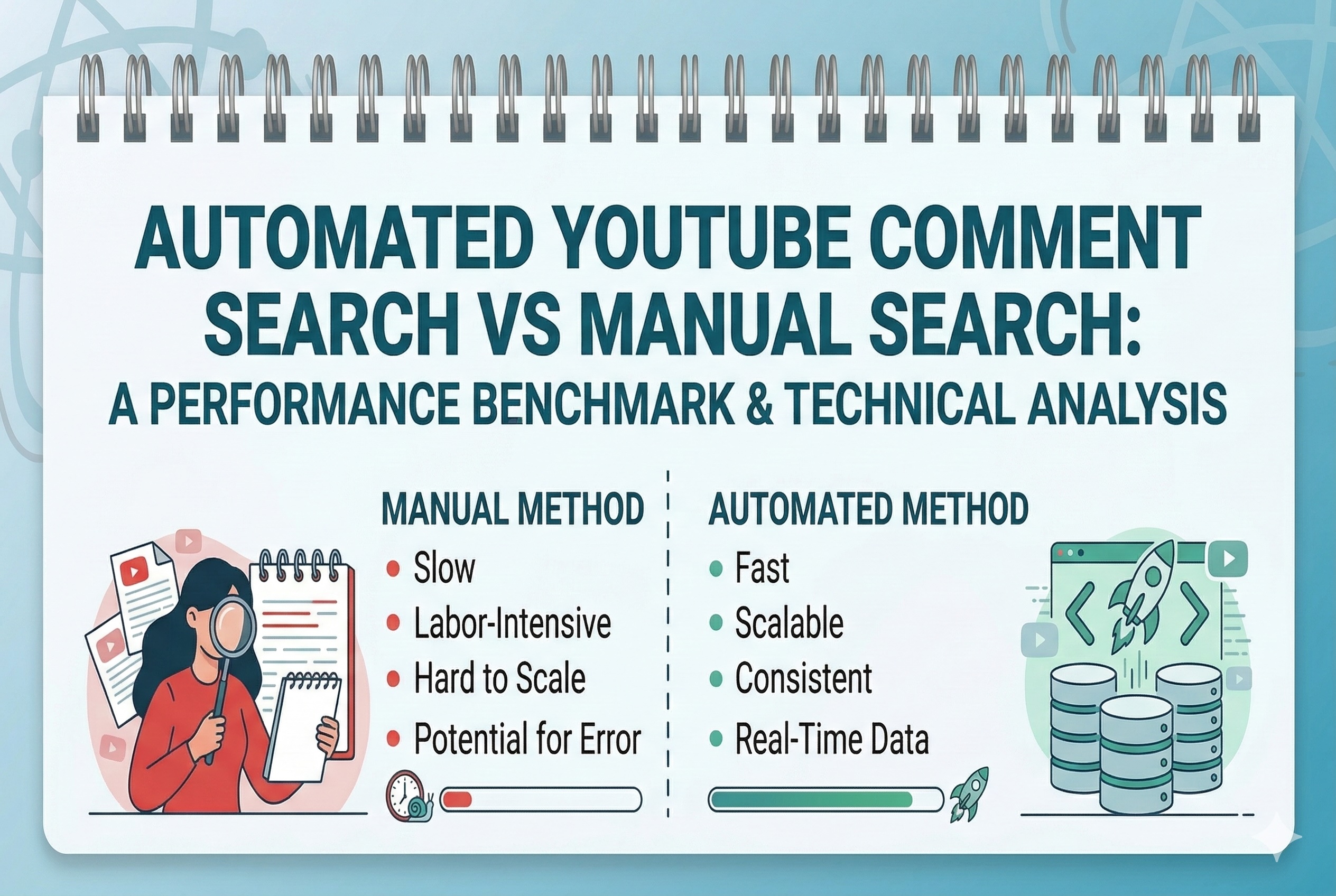

In an era of data-driven marketing and audience research, YouTube comments have become a treasure trove of unfiltered consumer feedback. They contain real questions, candid opinions, recurring complaints, and spontaneous enthusiasm that no focus group can replicate. However, extracting meaningful insight from millions of comments spread across thousands of videos is not easy, and the method you choose makes all the difference. This article compares two methods head-to-head, automated YouTube comment search and manual search, on speed, accuracy, scalability, cost, and practical application.

Why YouTube Comment Search Matters

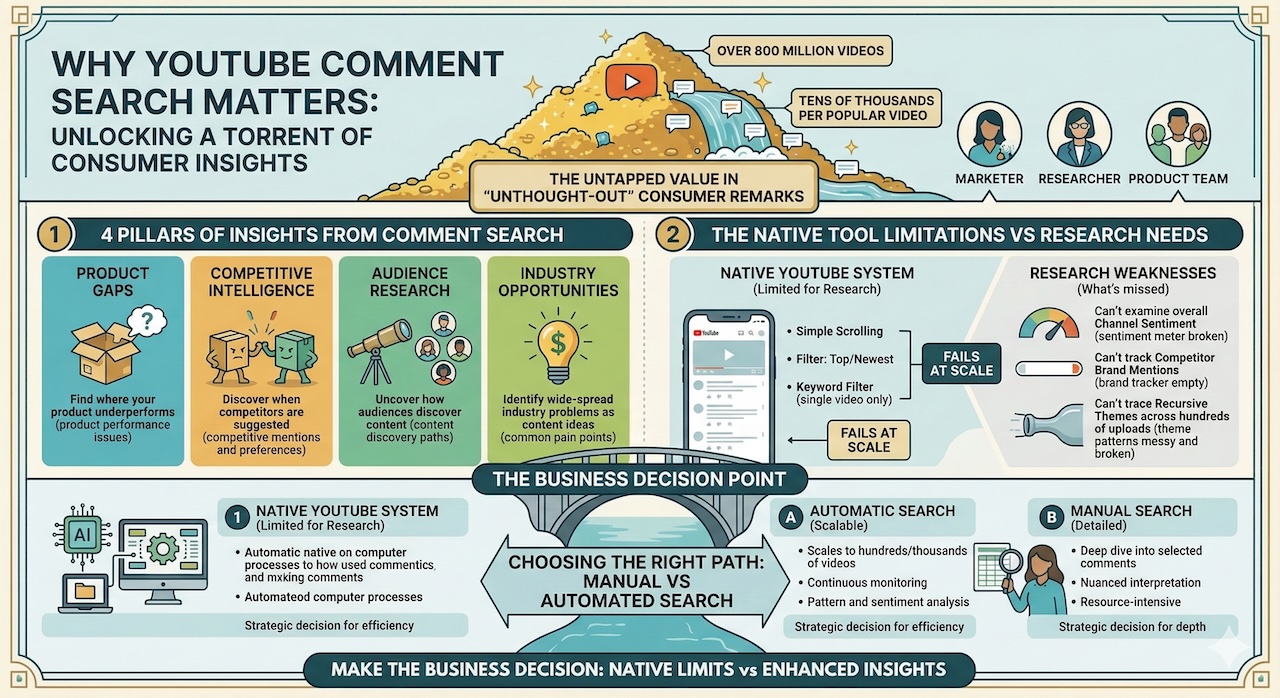

Before comparing the methods, it is worth establishing why marketers, researchers, and product teams bother to search YouTube comments at all.

There are more than 800 million videos on YouTube, and a popular video can attract tens of thousands of comments. Within those comments runs a steady stream of unprompted consumer opinion. Someone asks why a product does not work a certain way: that is a product gap. Someone recommends a competitor in the comment section: that is competitive intelligence. Someone explains exactly how they found the video: that is audience research. Someone complains about an industry-wide problem: that is a content opportunity.

The problem is that YouTube's native comment system is not designed for research. You can scroll, sort by top or newest comments, and apply simple keyword filtering on a single video. But as soon as you need to analyze sentiment across a whole channel, track brand mentions in competitors' videos, or trace recurring themes across hundreds of uploads, the native interface quickly shows its limits.

This is where the choice between automated and manual search becomes a genuine business decision.

Defining the Two Approaches

Manual search is when a human researcher works directly in YouTube's interface: browsing the comments on each video, using YouTube's built-in sort and filter features, and recording important findings in a spreadsheet or document. It can also include using YouTube Studio's comment management tools when channel owners review comments on their own videos.

Automated search is the use of third-party tools, APIs, or custom scripts, usually built on the YouTube Data API, to programmatically extract, filter, rank, and analyze comments at scale. Tools in this category range from purpose-built applications such as Brandwatch, Mention, and TubeBuddy to Python scripts that query the YouTube Data API directly and run keyword matching or sentiment analysis on the responses.

Benchmark Category 1: Speed

Speed is the most obvious difference between the two approaches, and arguably the one that matters most for time-sensitive work.

A skilled human researcher can read and evaluate roughly 200 to 300 comments per hour of focused work. For a video with 5,000 comments, that is between 17 and 25 hours of work on a single video, before any synthesis or reporting. Scale that to a campaign monitoring 10 competitor videos a week, and manual search becomes operationally unsustainable within the first week.
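The estimate above is simple division, and it can be rerun for any video size. The sketch below assumes the 200 to 300 figure is an hourly reading rate, which is what the 17 to 25 hour range implies; the function name is illustrative.

```python
def manual_review_hours(comment_count, comments_per_hour):
    """Hours needed to read comment_count comments at a steady hourly rate."""
    return comment_count / comments_per_hour


# Figures from the text: 5,000 comments, read at 200 to 300 per hour.
slow = manual_review_hours(5000, 200)
fast = manual_review_hours(5000, 300)
print(f"{fast:.1f} to {slow:.1f} hours per video")  # 16.7 to 25.0 hours per video

# Ten videos a week, assuming (hypothetically) each has 5,000 comments.
print(f"{10 * fast:.0f} to {10 * slow:.0f} hours per week")  # 167 to 250 hours per week
```

Under that assumption, a ten-video week would need more reading hours than a single researcher has in a month.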

Automated systems operate on a different plane entirely. The YouTube Data API can return up to 100 comments per request, and a well-written script can make hundreds of requests within a few minutes, retrieving thousands of comments in the time it takes a human researcher to open a spreadsheet. A typical automation pipeline can collect, filter, and categorize 50,000 comments in under an hour. Some commercial platforms process this volume in near real time, flagging a keyword match as soon as a comment is posted.
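As a rough illustration of what such a script looks like, the sketch below pages through the YouTube Data API v3 `commentThreads` endpoint using only the Python standard library. It is a minimal sketch, not a production client: the function names are illustrative, the API key is one you would supply yourself, and quota limits, error handling, and reply threads are deliberately left out.

```python
import json
import urllib.parse
import urllib.request

API_URL = "https://www.googleapis.com/youtube/v3/commentThreads"


def fetch_page(video_id, api_key, page_token=None):
    """Request one page of top-level comment threads (up to 100 per call)."""
    params = {
        "part": "snippet",
        "videoId": video_id,
        "maxResults": 100,
        "textFormat": "plainText",
        "key": api_key,
    }
    if page_token:
        params["pageToken"] = page_token
    url = API_URL + "?" + urllib.parse.urlencode(params)
    with urllib.request.urlopen(url) as response:
        return json.load(response)


def collect_comments(video_id, api_key, fetch=fetch_page, max_pages=50):
    """Follow nextPageToken until pages run out or max_pages is reached."""
    comments, token = [], None
    for _ in range(max_pages):
        page = fetch(video_id, api_key, token)
        for item in page.get("items", []):
            snippet = item["snippet"]["topLevelComment"]["snippet"]
            comments.append(snippet["textDisplay"])
        token = page.get("nextPageToken")
        if not token:
            break
    return comments
```

The `fetch` parameter is there so the paging logic can be exercised with a stub instead of live network calls; with the default of 50 pages, one call gathers at most 5,000 top-level comments.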

Verdict: Automation wins by a landslide. For any study involving a large volume of comments across more than one or two videos, manual search cannot compete on speed.

Benchmark Category 2: Accuracy and Contextual Understanding

Speed does not matter if the results are wrong or misleading, and this is where manual search regains a lot of ground.

Human readers grasp context intuitively. A trained researcher can recognize sarcasm, irony, cultural references, humor, and ambiguity. When someone writes "Oh great, another product that breaks in two weeks," a human reads frustration. Many automated systems, especially simple keyword matchers as opposed to sophisticated natural language processing systems, would classify this comment as positive because it contains no explicitly negative keywords.
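The failure is easy to reproduce. The sketch below is a deliberately naive keyword scorer with small, made-up word lists (not taken from any real tool), run against the sarcastic comment quoted above.

```python
import re

# Hypothetical, intentionally tiny word lists for illustration only.
POSITIVE_WORDS = {"great", "love", "excellent", "amazing", "best"}
NEGATIVE_WORDS = {"bad", "terrible", "awful", "hate", "worst", "disappointed"}


def naive_sentiment(comment):
    """Label a comment by counting positive versus negative keywords."""
    words = set(re.findall(r"[a-z']+", comment.lower()))
    positive_hits = len(words & POSITIVE_WORDS)
    negative_hits = len(words & NEGATIVE_WORDS)
    if positive_hits > negative_hits:
        return "positive"
    if negative_hits > positive_hits:
        return "negative"
    return "neutral"


print(naive_sentiment("Oh great, another product that breaks in two weeks."))
# positive
```

The scorer sees "great" and nothing from its negative list, so the complaint is counted as praise. Adding "breaks" to the negative list would fix this one comment but not sarcasm in general.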

Sentiment analysis, the automated classification of comments as positive, negative, or neutral, is an imperfect science. Most commercial sentiment tools achieve between 70 and 85 percent accuracy on general social text, and YouTube comments, with their informal language, abbreviations, slang, and mixed languages, sit at the difficult end of the spectrum. An error rate of 15 to 30 percent across tens of thousands of comments can significantly change research conclusions unless it is accounted for.
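To put that error rate in absolute terms, the short calculation below applies the 70 to 85 percent accuracy range to a batch of 50,000 comments. The batch size is only an example, and the function name is illustrative.

```python
def expected_misclassified(total_comments, accuracy):
    """Expected number of wrongly labeled comments at a given accuracy."""
    return round(total_comments * (1 - accuracy))


for accuracy in (0.85, 0.70):
    wrong = expected_misclassified(50_000, accuracy)
    print(f"{accuracy:.0%} accurate: about {wrong:,} comments mislabeled")
# 85% accurate: about 7,500 comments mislabeled
# 70% accurate: about 15,000 comments mislabeled
```

If those errors lean in one direction, for example sarcasm consistently read as praise, the reported sentiment split shifts with them.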

Manual search, by contrast, offers near-perfect contextual accuracy. A skilled researcher reading 300 comments will categorize sentiment and catch subtle meaning at a level no current automated system can match.

The practical implication is this: automated search excels at finding comments that contain particular keywords or phrases, but it often fails to grasp what those comments actually mean.

Verdict: Manual search is more accurate and richer in context. Automation is adequate for surface-level keyword discovery, but its results need human verification, particularly in sentiment-driven studies.

Benchmark Category 3: Scalability

This category is almost entirely one-sided.

Manual search does not scale. Each extra video, keyword, or channel you want to track costs a proportional number of human hours. Doing more of it brings no efficiency gain; in fact, fatigue and declining attention mean that quality tends to drop as volume rises. A researcher who can accurately evaluate 300 comments in the first hour of work will evaluate fewer, and less accurately, by the fourth hour.

Automation scales horizontally with ease, and quality does not degrade. The computational cost per comment is nearly the same whether you are searching 100 comments or 10 million. A query that takes two minutes on a dataset of 1,000 comments might take four minutes on a dataset of 100,000. Adding new channels, keywords, or timeframes to an automated pipeline is a configuration change, not a staffing decision.

For enterprise-level applications, such as sector-wide brand monitoring, competitive intelligence across hundreds of channels at once, or longitudinal research tracking comment sentiment over months, automation is not merely desirable. It is the only viable option.

Verdict: Automation is the clear winner. Manual search has a hard ceiling that automation does not come close to hitting.

Benchmark Category 4: Cost

The cost analysis depends on the scale and frequency of the research.

Manual search is cheaper for occasional, small-scale research, such as reviewing the comments on five to ten videos each quarter. It requires no software subscriptions, API setup, technical skills, or maintenance. A junior researcher spending a couple of hours on the task costs far less than a commercial comment analysis platform.

The cost equation flips quickly with scale, however. At moderate volume, such as weekly searches across 30 to 50 videos on several channels, the human hours spent on manual search start to exceed the price of typical automation solutions. At enterprise level, the financial case for automation is overwhelming: manual search at that scale would require several full-time researchers, while an automated pipeline on cloud infrastructure could cost a fraction of those salaries.
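The crossover point can be estimated with simple arithmetic. In the sketch below every figure is a placeholder rather than real pricing: the tool cost, hourly rate, and reading speed are hypothetical inputs you would replace with your own.

```python
def monthly_manual_cost(comments_per_month, comments_per_hour, hourly_rate):
    """Labor cost of reading every comment by hand for one month."""
    return comments_per_month / comments_per_hour * hourly_rate


def break_even_comments(tool_cost_per_month, comments_per_hour, hourly_rate):
    """Monthly comment volume above which the tool costs less than manual reading."""
    return tool_cost_per_month * comments_per_hour / hourly_rate


# Hypothetical inputs: a 500-per-month tool, a researcher paid 25 per hour,
# reading 250 comments per hour.
threshold = break_even_comments(500, 250, 25)
print(f"Break-even at about {threshold:,.0f} comments per month")
# Break-even at about 5,000 comments per month
```

This ignores setup time for the tool and the human verification pass that automated results still need, so the real threshold would sit somewhat higher.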

Manual search also carries a hidden cost that should not be overlooked: opportunity cost. When trained analysts spend their hours scrolling through comment sections, they are not spending them interpreting findings, developing strategy, or generating insight.

Benchmark Category 5: Depth of Insight

Ironically, the method that processes less data can yield deeper insight when done well.

The slowness and focus of manual search often make it effective at surfacing the subtle, surprising findings that a keyword filter cannot. A human reader may notice a pattern in the way viewers phrase a particular question, spot an emerging trend before it reaches statistically significant volume, or find a single, exceptionally detailed comment that reframes an entire research hypothesis. Human curiosity and associative thinking are valuable research tools in their own right.

Automated search is efficient at finding what you already know you are looking for. If you want to know how many comments on competitor videos mention poor customer service, automation will answer faster and more completely than any human researcher. But if you do not know what you are looking for, keyword-based automation cannot find it. Unsupervised machine learning methods, such as clustering algorithms that group comments by theme without a predefined keyword list, partially address this limitation, but they are technically complex and not readily available to most marketing departments.
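To give a feel for grouping without a keyword list, the sketch below uses a much cruder stand-in than a real clustering algorithm: a greedy single-pass grouping based on word overlap (Jaccard similarity). The stopword list and the 0.3 threshold are arbitrary assumptions, and a production pipeline would more likely use text embeddings with an established clustering library.

```python
import re

# Small illustrative stopword list; a real one would be far longer.
STOPWORDS = {"the", "a", "an", "is", "it", "to", "and", "of", "i", "my",
             "this", "that", "for", "in", "on", "was"}


def tokens(text):
    """Lowercase word set with stopwords removed."""
    return {w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS}


def jaccard(a, b):
    """Share of words two token sets have in common."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def group_by_similarity(comments, threshold=0.3):
    """Put each comment in the first group whose seed comment is similar enough."""
    groups = []  # each entry: (seed_tokens, [member comments])
    for comment in comments:
        comment_tokens = tokens(comment)
        for seed_tokens, members in groups:
            if jaccard(comment_tokens, seed_tokens) >= threshold:
                members.append(comment)
                break
        else:
            groups.append((comment_tokens, [comment]))
    return [members for _, members in groups]


sample = [
    "battery died after a week",
    "the battery died in one week",
    "shipping was slow",
]
print(group_by_similarity(sample))
```

The two battery complaints end up in one group and the shipping complaint in another, with no keyword supplied in advance. Results depend heavily on comment order and the threshold, which is part of why the more robust methods need technical expertise.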

Verdict: Manual search generates more exploratory insight. Automation is better suited to confirmatory and quantitative studies.

The Hybrid Approach: Best of Both Worlds

In practice, the most successful research teams do not choose one method over the other; they sequence them.

First, automation collects thousands of relevant comments at scale and filters out the obvious noise. A human researcher then reads a structured sample, for example the top 200 to 300 comments ranked by engagement, recency, or keyword relevance, to confirm results, catch false positives, and surface deeper qualitative insights that the algorithm missed. Findings are then reported with statistical support from the automated data and qualitative texture from the manual review.
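The hand-off from the automated stage to the human reader can be as simple as a filter followed by a ranked cut. The sketch below assumes each comment is a dictionary with `text` and `likes` fields (hypothetical field names) and ranks by likes as a proxy for engagement.

```python
def build_review_sample(comments, keywords, sample_size=300):
    """Keep comments that mention any keyword, then return the most-liked ones."""
    lowered = [keyword.lower() for keyword in keywords]
    matches = [
        comment for comment in comments
        if any(keyword in comment["text"].lower() for keyword in lowered)
    ]
    matches.sort(key=lambda comment: comment["likes"], reverse=True)
    return matches[:sample_size]


collected = [
    {"text": "Battery is awful", "likes": 3},
    {"text": "Love the battery life", "likes": 10},
    {"text": "Nice video", "likes": 50},
]
for comment in build_review_sample(collected, ["battery"], sample_size=2):
    print(comment["likes"], comment["text"])
# 10 Love the battery life
# 3 Battery is awful
```

Ranking by recency or by a relevance score would only change the sort key; the human reader still sees a bounded, prioritized list rather than the full dataset.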

This hybrid approach delivers the speed and scalability of automation while retaining the contextual intelligence that only human judgment provides.

Final Verdict

Neither method is universally better than the other. Manual search remains useful for small-scale, exploratory, or nuance-dependent research where contextual accuracy matters most. Automated search is indispensable for any research that operates at large volume, needs to be fast, or runs over long periods.

For many modern marketing and research teams, the question is not whether to automate YouTube comment search, but how to automate it effectively and what to do with the results that automation surfaces. Build that workflow, and YouTube comment sections stop being noise and become one of the richest real-time research sources your business can have.

Featured Image generated by Google Gemini.
