When organizations attempt to optimize website performance, attention often centers on application code, databases, or frontend optimizations. In practice, however, the first delay many users experience occurs earlier—at the network layer.

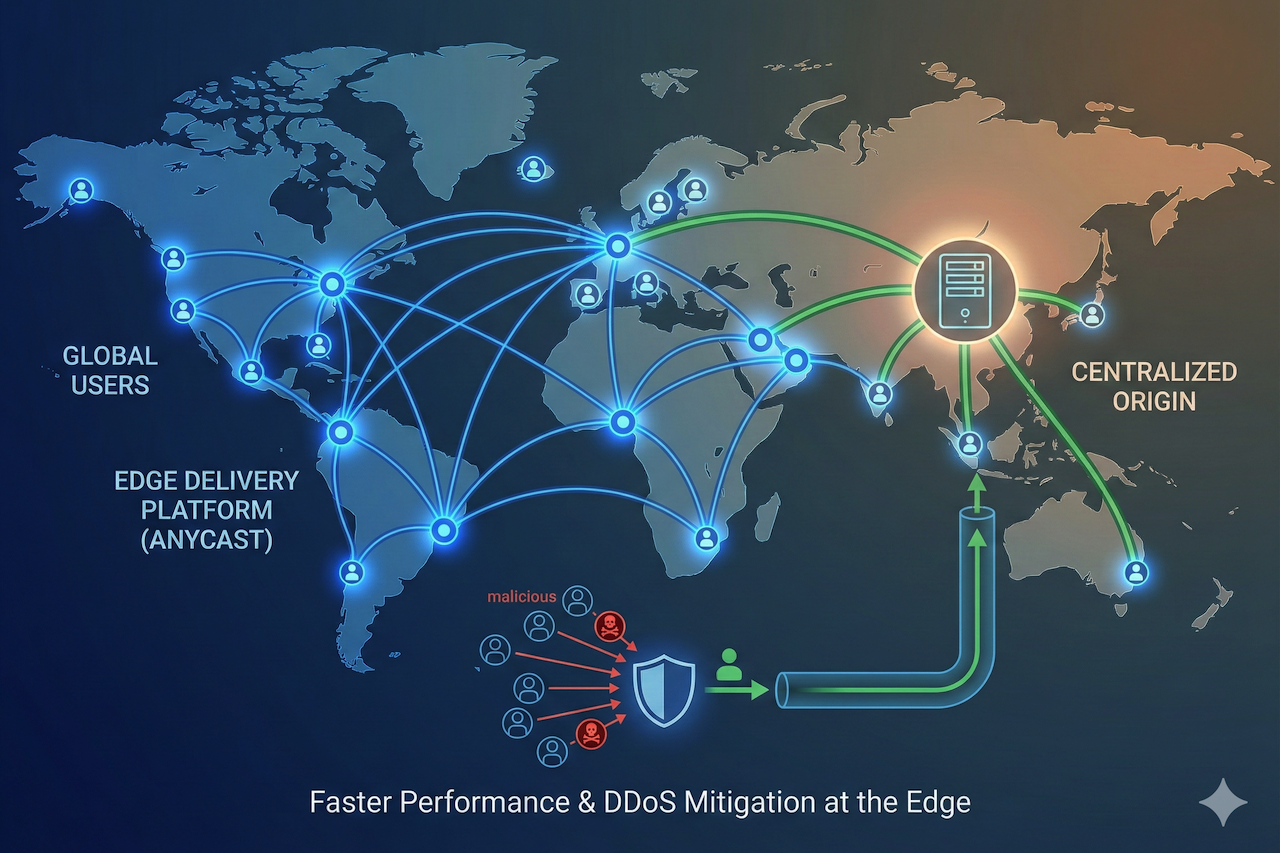

Edge delivery platforms built on secure content delivery network (CDN) principles address this by moving traffic exchange points closer to users, rather than routing every request to a centralized origin. Delivery, inspection, and security controls are applied at the edge, before application infrastructure is involved.

Platforms such as Trafficmind follow this model by using Anycast routing to direct users to a nearby point of presence. At that edge location, requests can be cached, inspected, filtered, and forwarded to the origin only when necessary.

Anycast Routing Explained

When a secure edge delivery service uses Anycast routing, users do not connect to a single server or region. Instead, the same IP address prefix is announced from multiple edge locations simultaneously. Internet routing, primarily BGP, then steers each user's traffic to the edge location that appears closest or best connected from the user's network perspective. BGP chooses among routes using operator policy and AS-path length rather than measured latency, so "closest" here means closest in network topology.

This does not guarantee strict geographic proximity, but well-peered networks typically produce shorter and more stable paths. As a result, requests often reach an edge location quickly, without traversing long-haul or congested routes.
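The selection step can be illustrated with a heavily simplified model of BGP best-path selection. In the sketch below, every point of presence (PoP) announces the same prefix. The user's ISP keeps the route with the highest local preference and, among those, the shortest AS path. The PoP names, the documentation-range AS numbers, and the placeholder prefix 198.51.100.0/24 are all hypothetical. Real routers apply several more tie-breakers, so this is an illustration rather than a faithful BGP implementation.

```python
from dataclasses import dataclass

ANYCAST_PREFIX = "198.51.100.0/24"  # hypothetical prefix announced by every PoP


@dataclass
class Route:
    prefix: str
    pop: str               # edge location that originated the announcement
    as_path: list[int]     # autonomous systems the announcement traversed
    local_pref: int = 100  # operator policy; higher wins


def best_route(routes: list[Route]) -> Route:
    """Simplified BGP selection: highest local_pref, then shortest AS path.

    Real BGP also compares origin type, MED, eBGP vs. iBGP, IGP cost, router ID, etc.
    """
    return min(routes, key=lambda r: (-r.local_pref, len(r.as_path)))


# Announcements of the same prefix as seen from one user's ISP (hypothetical).
seen_by_isp = [
    Route(ANYCAST_PREFIX, "frankfurt", [64500, 64496]),
    Route(ANYCAST_PREFIX, "amsterdam", [64496]),  # direct peering with the platform
    Route(ANYCAST_PREFIX, "singapore", [64501, 64502, 64496]),
]

chosen = best_route(seen_by_isp)
print(f"{chosen.prefix} -> {chosen.pop} via AS path {chosen.as_path}")
```

Here the Amsterdam route wins because its one-hop AS path reflects direct peering. Nothing in the selection looks at physical distance, which is why Anycast follows network topology rather than strict geography.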

The performance impact generally comes from several factors:

| Mechanism | What Changes with Anycast | Practical Result |
|---|---|---|
| Shorter round-trip time (RTT) | Fewer hops before reaching the first edge | Faster initial responses for global users |
| Faster connection setup | TCP and TLS handshakes terminate at a nearby edge | Lower time to first byte (TTFB) and quicker page or API starts |
| Lower variance | Reduced exposure to long-distance congestion | More consistent performance during traffic peaks |

From a client perspective, multiple physical locations effectively behave as a single nearby network entry point.
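The "Faster connection setup" effect can be made concrete by counting round trips. This rough sketch assumes a fresh connection using TLS 1.3: one round trip for the TCP handshake, one for the TLS handshake, and one for the HTTP request and first response byte. That puts TTFB at roughly three RTTs plus server time. The RTT values are illustrative assumptions, not measurements. The edge case also assumes a cache hit or an already-warm edge-to-origin connection.

```python
# Round trips on a fresh HTTPS connection before the first response byte.
TCP_HANDSHAKE_RTTS = 1
TLS13_HANDSHAKE_RTTS = 1  # a full TLS 1.2 handshake typically needs 2
REQUEST_RTTS = 1


def estimated_ttfb_ms(rtt_ms: float, server_ms: float = 0.0) -> float:
    """Estimate time to first byte for a new TLS 1.3 connection.

    Ignores DNS lookup, packet loss, TCP slow start, and 0-RTT resumption.
    """
    rtts = TCP_HANDSHAKE_RTTS + TLS13_HANDSHAKE_RTTS + REQUEST_RTTS
    return rtts * rtt_ms + server_ms


# Illustrative RTTs: a distant origin vs. a nearby Anycast edge.
origin_rtt_ms = 150.0
edge_rtt_ms = 20.0

print(f"direct to origin: {estimated_ttfb_ms(origin_rtt_ms):.0f} ms")  # 450 ms
print(f"via nearby edge:  {estimated_ttfb_ms(edge_rtt_ms):.0f} ms")    # 60 ms
```

On a cache miss the edge still has to reach the origin. However, edges typically reuse long-lived connections for that leg, so the user does not pay the full handshake cost across the long path.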

Why a Distributed Edge Helps With DDoS Mitigation

Anycast is often discussed in terms of performance, but it also changes how attacks affect infrastructure. When traffic is sent directly to a single origin region, even moderate abuse can consume resources intended for legitimate users.

By terminating traffic at the edge, platforms can absorb and filter malicious requests before they reach systems that are more expensive and difficult to scale.
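This sketch illustrates why distributed ingress helps. It makes a simplifying assumption: bot traffic from each region lands entirely at that region's nearest point of presence (PoP). It then compares per-PoP load with what a single origin link would face. All volumes and capacities are hypothetical.

```python
# Hypothetical attack volume (Gbps) arriving from bots in each region.
attack_gbps_by_region = {
    "north_america": 120,
    "europe": 90,
    "asia": 150,
    "south_america": 40,
}

POP_CAPACITY_GBPS = 200     # hypothetical ingress capacity per edge PoP
ORIGIN_CAPACITY_GBPS = 100  # hypothetical capacity of the single origin link


def saturated(load_by_site: dict[str, float], capacity: float) -> list[str]:
    """Return the sites whose load exceeds their capacity."""
    return [site for site, load in load_by_site.items() if load > capacity]


# Without an edge: all attack traffic converges on one origin link.
total = sum(attack_gbps_by_region.values())
origin_overloaded = saturated({"origin": total}, ORIGIN_CAPACITY_GBPS)

# With Anycast (simplified): each region's traffic is absorbed by its nearest PoP.
overloaded_pops = saturated(attack_gbps_by_region, POP_CAPACITY_GBPS)

print(f"total attack: {total} Gbps")
print(f"origin overloaded: {origin_overloaded}")  # ['origin']
print(f"PoPs overloaded:   {overloaded_pops}")    # []
```

Real distributions are rarely this even. A botnet concentrated in one network lands mostly on one PoP, so per-PoP capacity and filtering still matter.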

Without an edge layer, attack traffic can strain infrastructure in several ways:

- Upstream network capacity becomes saturated, reducing availability for real users.

- Compute resources are consumed by TLS handshakes and request parsing, even when traffic is malicious.

- Application and database layers are forced to process high-rate or malformed requests, increasing latency and error rates.

Filtering traffic at the edge avoids these costs at the origin.

Example: An attacker floods an API authentication endpoint with high-rate, randomized requests. Allowing that traffic to reach the origin triggers TLS termination, application execution, and database lookups. Dropping it at the edge prevents those processes from occurring at all.
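One common edge-side control for this scenario is a per-client rate limit enforced before anything is forwarded. The sketch below uses a token bucket keyed by client IP. The rate, the burst size, and the `handle_at_edge` and `forward_to_origin` names are hypothetical. A real platform would also key on richer signals than the IP address alone.

```python
import time


class TokenBucket:
    """Allow `rate` requests per second on average, with bursts up to `burst`."""

    def __init__(self, rate: float, burst: float, now: float):
        self.rate = rate
        self.capacity = burst
        self.tokens = float(burst)  # start full
        self.updated = now

    def allow(self, now: float) -> bool:
        # Refill for the time elapsed since the last check, capped at capacity.
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


buckets: dict[str, TokenBucket] = {}  # one bucket per client IP (hypothetical key)


def forward_to_origin(path: str) -> int:
    # Hypothetical stand-in for the expensive path: TLS to origin, app code, DB lookup.
    return 200


def handle_at_edge(client_ip: str, path: str, now: float) -> int:
    """Return an HTTP status; rejected requests never reach the origin."""
    bucket = buckets.get(client_ip)
    if bucket is None:
        bucket = buckets[client_ip] = TokenBucket(rate=5, burst=10, now=now)
    if not bucket.allow(now):
        return 429  # Too Many Requests, answered at the edge
    return forward_to_origin(path)


# 100 login attempts from one address arriving at effectively the same instant.
start = time.monotonic()
statuses = [handle_at_edge("192.0.2.7", "/api/login", start) for _ in range(100)]
print(statuses.count(200), "forwarded,", statuses.count(429), "rejected at edge")
```

Only the initial burst of 10 requests is forwarded. After that, the client is refilled at 5 requests per second. The other 90 attempts cost the origin nothing.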

How Edge Platforms Process Traffic

For edge delivery platforms to remain effective under load, delivery and security logic must execute close to where traffic enters the network, with predictable behavior during spikes. Many modern platforms are designed around this principle.

Unified Request Handling

Some platforms implement TLS termination and request inspection within a single compiled request pipeline. This reduces internal handoffs between decryption and allow/block decisions, improving efficiency under load.
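The single-pipeline idea can be roughly illustrated as a series of ordered stages: decryption, parsing, inspection, and the allow/block decision. All stages operate on one in-process request object, and processing stops at the first stage that blocks. In this sketch the stage names and rules are hypothetical, and decryption is stubbed out. A production pipeline would be compiled native code, not Python.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Request:
    raw: bytes
    headers: dict = field(default_factory=dict)
    path: str = ""


# Each stage returns None to continue, or a reason string to block.
Stage = Callable[[Request], Optional[str]]


def decrypt(req: Request) -> Optional[str]:
    # Stub: a real edge would terminate TLS here and hand plaintext onward.
    return None


def parse(req: Request) -> Optional[str]:
    try:
        lines = req.raw.decode("ascii").split("\r\n")
        _method, path, _version = lines[0].split(" ")
    except (UnicodeDecodeError, ValueError):
        return "malformed request line"
    req.path = path
    for line in lines[1:]:
        if ":" in line:
            name, value = line.split(":", 1)
            req.headers[name.strip().lower()] = value.strip()
    return None


def inspect_request(req: Request) -> Optional[str]:
    # Hypothetical rules; real inspection uses far richer signatures and signals.
    if "user-agent" not in req.headers:
        return "missing user-agent"
    if ".." in req.path:
        return "path traversal"
    return None


PIPELINE: list[Stage] = [decrypt, parse, inspect_request]


def run(req: Request) -> str:
    """Pass one request through every stage, stopping at the first block."""
    for stage in PIPELINE:
        reason = stage(req)
        if reason is not None:
            return f"block: {reason}"
    return "allow"


print(run(Request(b"GET /index.html HTTP/1.1\r\nHost: example.com\r\nUser-Agent: demo\r\n\r\n")))
print(run(Request(b"GET /../etc/passwd HTTP/1.1\r\nUser-Agent: demo\r\n\r\n")))
print(run(Request(b"garbage")))
```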

Layer 4 Filtering for Volumetric Traffic

Volumetric attacks are often addressed at Layer 4, using packet- or header-level enforcement. By blocking abusive traffic before it is processed as HTTP, edge platforms can preserve CPU and memory resources at higher layers.
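Header-level enforcement can be pictured in simplified form. The filter below decides on each packet using only the protocol, the destination port, the TCP SYN flag, and a per-source SYN counter, all before any HTTP processing. The allowed ports, the threshold, and the `Packet` type are hypothetical. Real Layer 4 filtering usually runs in the kernel or on network hardware (for example, XDP/eBPF programs or router ACLs), not in Python.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    src_ip: str
    protocol: str      # "tcp" or "udp"
    dst_port: int
    syn: bool = False  # TCP SYN flag (new connection attempt)


# Services this edge exposes: HTTP, HTTPS, and HTTP/3 over QUIC.
ALLOWED = {("tcp", 80), ("tcp", 443), ("udp", 443)}
SYN_LIMIT_PER_WINDOW = 3  # hypothetical per-source threshold

# SYNs seen per source in the current window (window reset not shown).
syn_counts = Counter()


def l4_verdict(pkt: Packet) -> str:
    """Decide from header fields alone; no payload or HTTP parsing happens here."""
    if (pkt.protocol, pkt.dst_port) not in ALLOWED:
        return "drop"  # e.g. a UDP flood aimed at a port nothing listens on
    if pkt.syn:
        syn_counts[pkt.src_ip] += 1
        if syn_counts[pkt.src_ip] > SYN_LIMIT_PER_WINDOW:
            return "drop"  # too many new connections from one source
    return "pass"


traffic = [Packet("192.0.2.50", "udp", 19000)] + [
    Packet("192.0.2.66", "tcp", 443, syn=True) for _ in range(5)
]
print([l4_verdict(p) for p in traffic])
```

Per-source counting is weak against spoofed source addresses. That is one reason real deployments also rely on techniques such as SYN cookies.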

Layer 7 Analysis for Application-Layer Abuse

Application-layer attacks often appear protocol-compliant, requiring behavioral analysis to identify abuse. High-throughput telemetry systems and real-time aggregation are commonly used to analyze request rates, paths, payload characteristics, and client fingerprints. Once abnormal patterns are identified, enforcement actions can be applied.
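The aggregation step can be sketched as a sliding-window counter keyed by client fingerprint and path. It counts recent requests per key and flags any key that exceeds a threshold. The 10-second window, the fixed threshold, and the fingerprint strings are hypothetical. Real systems combine many more signals and often use adaptive baselines rather than a fixed limit.

```python
from collections import defaultdict, deque

WINDOW_S = 10.0
MAX_REQUESTS_PER_WINDOW = 50  # hypothetical fixed threshold

# (fingerprint, path) -> timestamps of recent requests
windows = defaultdict(deque)


def observe(fingerprint: str, path: str, ts: float) -> bool:
    """Record a request; return True if this key is now over the limit."""
    q = windows[(fingerprint, path)]
    q.append(ts)
    # Evict timestamps that have slid out of the window.
    while q and q[0] <= ts - WINDOW_S:
        q.popleft()
    return len(q) > MAX_REQUESTS_PER_WINDOW


# A scripted client hits /search 20 times per second; a browser sends 5 requests.
flagged = set()
for i in range(100):
    if observe("fp-scripted", "/search", i * 0.05):
        flagged.add("fp-scripted")
for i in range(5):
    if observe("fp-browser", "/search", i * 2.0):
        flagged.add("fp-browser")
print(flagged)
```

A flagged key could then feed an enforcement action, such as rate limiting or blocking that fingerprint.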

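Some platforms avoid interactive challenges or client-side execution. This can benefit API-driven traffic: API clients such as mobile apps and server-to-server integrations generally cannot solve a CAPTCHA or run a JavaScript challenge, so challenging them tends to break legitimate requests.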

Caching and Origin Isolation

Reducing latency is only part of the edge delivery equation. The share of requests answered at the edge (the cache hit ratio) determines how much load reaches the origin.
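Put differently, origin load scales with the miss rate. Let $R$ be the total request rate and $h$ the edge cache hit ratio. The worked example uses illustrative numbers only:

$$
R_{\text{origin}} = R\,(1 - h)
$$

$$
R = 10\,000\ \text{req/s}: \qquad h = 0.95 \;\Rightarrow\; R_{\text{origin}} = 500\ \text{req/s}, \qquad h = 0.99 \;\Rightarrow\; R_{\text{origin}} = 100\ \text{req/s}
$$

Raising the hit ratio from 95% to 99% cuts origin traffic by a factor of five. Seemingly small hit-ratio gains can therefore matter a great deal.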

Beyond progressive (pull-through) caching, where the first request for an object is forwarded to the origin and the response is then cached at the edge, some platforms support pre-warming and replication across edge locations. This allows content to be served immediately during demand spikes without first pulling it from the origin.

Operationally, this approach can result in:

- Smoother handling of traffic surges, because more responses are served from the edge

- Immediate availability of frequently accessed content

- Reduced coupling between edge demand and origin capacity
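Progressive (pull-through) caching and pre-warming differ in how many origin fetches a demand spike triggers. The sketch below counts origin fetches when a popular object is requested at several PoPs at once. `EdgeCache`, `Origin`, the PoP identifiers, and the asset path are hypothetical. The model also assumes one origin fetch per PoP on a miss. Real platforms often add tiered caching or request coalescing, which reduce misses further.

```python
class Origin:
    """Counts how many times edge caches had to fetch from the origin."""

    def __init__(self):
        self.fetches = 0

    def fetch(self, key: str) -> str:
        self.fetches += 1
        return f"content of {key}"


class EdgeCache:
    """Toy per-PoP cache: serve from the local store, fetch from origin on a miss."""

    def __init__(self, origin: Origin):
        self.origin = origin
        self.store = {}

    def get(self, key: str) -> str:
        if key not in self.store:
            self.store[key] = self.origin.fetch(key)  # cache miss
        return self.store[key]


POPS = ["fra", "ams", "sin", "iad", "gru"]  # hypothetical PoP identifiers
ASSET = "/launch/video.mp4"                  # hypothetical popular object


def spike(prewarm: bool) -> int:
    """Send 1,000 requests per PoP for ASSET; return origin fetches during the spike."""
    origin = Origin()
    caches = {pop: EdgeCache(origin) for pop in POPS}
    if prewarm:
        # Before demand arrives: fetch once, then replicate to every PoP.
        body = origin.fetch(ASSET)
        for cache in caches.values():
            cache.store[ASSET] = body
    fetches_before_spike = origin.fetches
    for cache in caches.values():
        for _ in range(1000):
            cache.get(ASSET)
    return origin.fetches - fetches_before_spike


print("pull-through:", spike(prewarm=False), "origin fetches during spike")  # 5
print("pre-warmed:  ", spike(prewarm=True), "origin fetches during spike")   # 0
```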

Network Quality and Peering Considerations

Anycast performance depends heavily on routing quality, not just the number of edge locations. Direct peering with major carriers and internet exchanges helps keep paths short and predictable. Some platforms also emphasize commercial-only traffic segregation, aiming to keep inspection capacity stable as traffic conditions change.

Where Edge Platforms Fit in Modern Architectures

Edge delivery platforms typically sit in front of applications or APIs as the first ingress point, forwarding clean traffic to existing load balancers or gateways. They are often designed for engineering-led integration, with security logic adapted to application behavior rather than relying on generic rules.
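A common complement to this placement is making the origin reachable only through the edge. Attackers then cannot bypass edge filtering by targeting the origin address directly. The sketch below checks a connecting address against the edge provider's egress ranges using Python's standard `ipaddress` module. The ranges are documentation prefixes, standing in as hypothetical placeholders for whatever list a provider actually publishes. In practice this check usually lives in a firewall or load-balancer rule, not in application code.

```python
import ipaddress

# Hypothetical edge egress ranges (documentation prefixes used as placeholders).
EDGE_EGRESS_RANGES = [
    ipaddress.ip_network("203.0.113.0/25"),
    ipaddress.ip_network("2001:db8:100::/48"),
]


def from_edge(remote_addr: str) -> bool:
    """Return True if the connecting address belongs to the edge platform."""
    addr = ipaddress.ip_address(remote_addr)
    # Membership is simply False when IP versions differ (IPv4 vs. IPv6).
    return any(addr in net for net in EDGE_EGRESS_RANGES)


for client in ["203.0.113.25", "203.0.113.200", "2001:db8:100::7"]:
    print(client, "accept" if from_edge(client) else "reject")
```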

From a governance standpoint, platform operators may also be subject to regional data protection laws, which influence how traffic data is processed, retained, and disclosed.

Key Takeaways

- Anycast routing shortens network paths to edge delivery platforms, reducing latency and stabilizing connection setup for global users.

- Distributed ingress makes it easier to absorb and filter DDoS traffic before it reaches origin systems.

- Combining TLS termination, inspection, caching, and enforcement at the edge reduces origin load and improves response consistency.

- Pre-warmed and replicated caching improves both average performance and stability during traffic spikes.

Conclusion

When evaluating an edge delivery or security platform, a practical question is whether the edge reliably becomes the first place traffic is terminated, inspected, and often served. Doing so allows origin resources to remain focused on legitimate application workloads rather than network-level noise.

Featured Image generated by Google Gemini.
