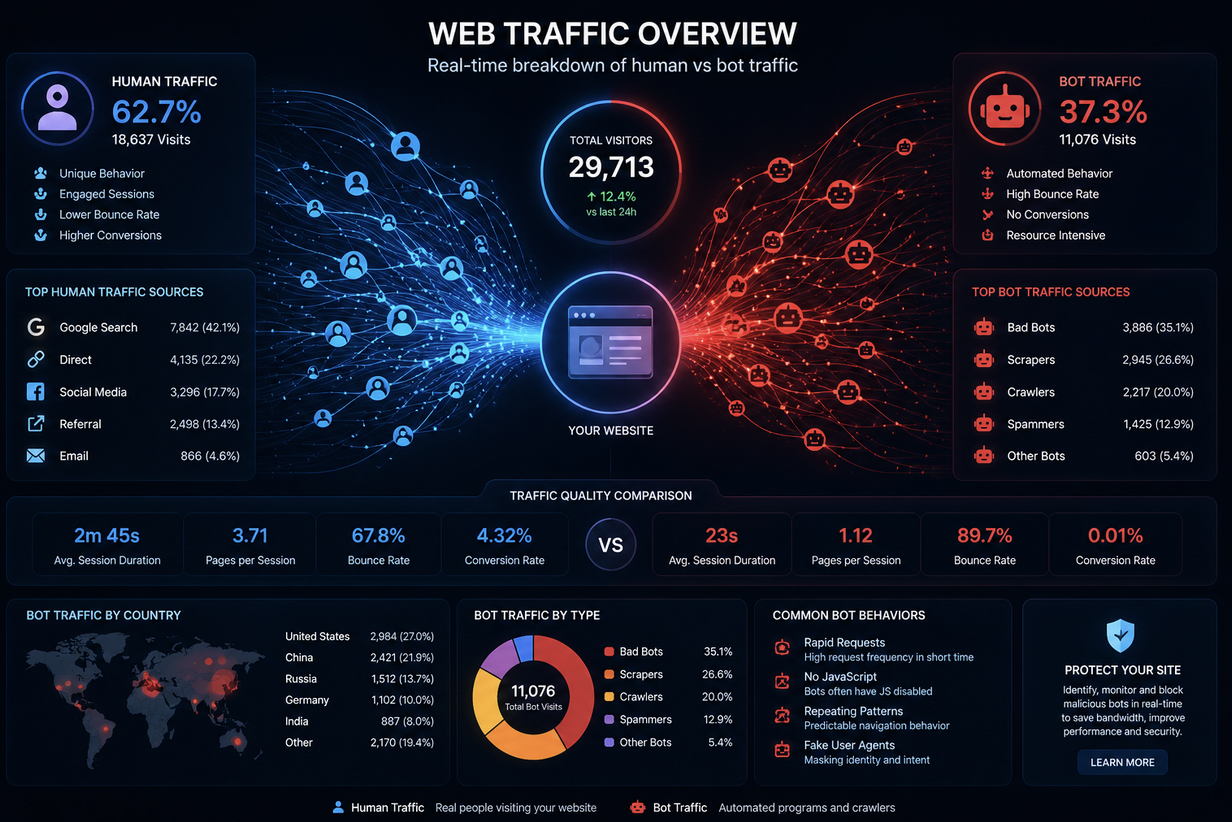

Every website owner obsesses over traffic. More visitors, better rankings, higher conversions. But there is a question that rarely gets asked loudly enough: how much of that traffic is actually human?

For most websites, the answer is genuinely unsettling.

A significant portion of internet traffic at any given moment is generated not by curious people browsing the web, but by automated programs designed to crawl, scrape, click, or exploit. Understanding this reality is not about inducing panic. It is about making smarter decisions with your data, your budget, and the security of everything you have built online.

Why So Much Traffic Is Not What It Seems

When someone visits your website, their browser sends requests to your server that carry an IP address and a user agent string, and the way the visit unfolds adds behavioral signals on top. A human browsing naturally will scroll, pause, click around, and spend time reading.

A bot moves differently. It loads pages in milliseconds, follows patterns without deviation, and often ignores the visual elements that real people gravitate toward.
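One rough way to put a number on "patterns without deviation" is to look at how evenly spaced a client's requests are. The sketch below is only an illustration: the function names and the 0.1 threshold are assumptions, and real traffic would need more than this single signal.

```python
from statistics import mean, pstdev


def interval_variation(timestamps):
    """Coefficient of variation of the gaps between consecutive requests.

    Returns None when there are too few requests to judge.
    """
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    if len(gaps) < 2:
        return None
    average_gap = mean(gaps)
    if average_gap == 0:
        return 0.0
    return pstdev(gaps) / average_gap


def looks_scripted(timestamps, max_variation=0.1):
    """True when request spacing is almost perfectly regular.

    max_variation is a hypothetical threshold, not a recommended value.
    """
    variation = interval_variation(timestamps)
    return variation is not None and variation < max_variation


# Request times in seconds since the first request (made-up values).
scripted_client = [0.0, 0.5, 1.0, 1.5, 2.0]
human_visitor = [0.0, 4.0, 31.0, 33.0, 90.0]
print(looks_scripted(scripted_client), looks_scripted(human_visitor))
```

A human's reading pauses make the gaps uneven, so the variation is high; a simple script that fires on a fixed timer produces a variation close to zero.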

Not all bots are malicious, to be fair. Search engine crawlers are bots. Website monitoring tools send automated pings. Legitimate services use bots to check uptime, index content, or verify integrations. The web could not function the way it does without some level of automation.

The problem is the bots that operate with harmful intent.

These include scrapers that steal your content the moment it goes live, credential stuffers that hammer login pages with stolen username-and-password combinations, click-fraud bots that drain advertising budgets by triggering fake ad clicks, and vulnerability scanners that probe your site for weaknesses. These are not edge cases. They represent a constant background hum of hostile activity that most website owners never directly see.

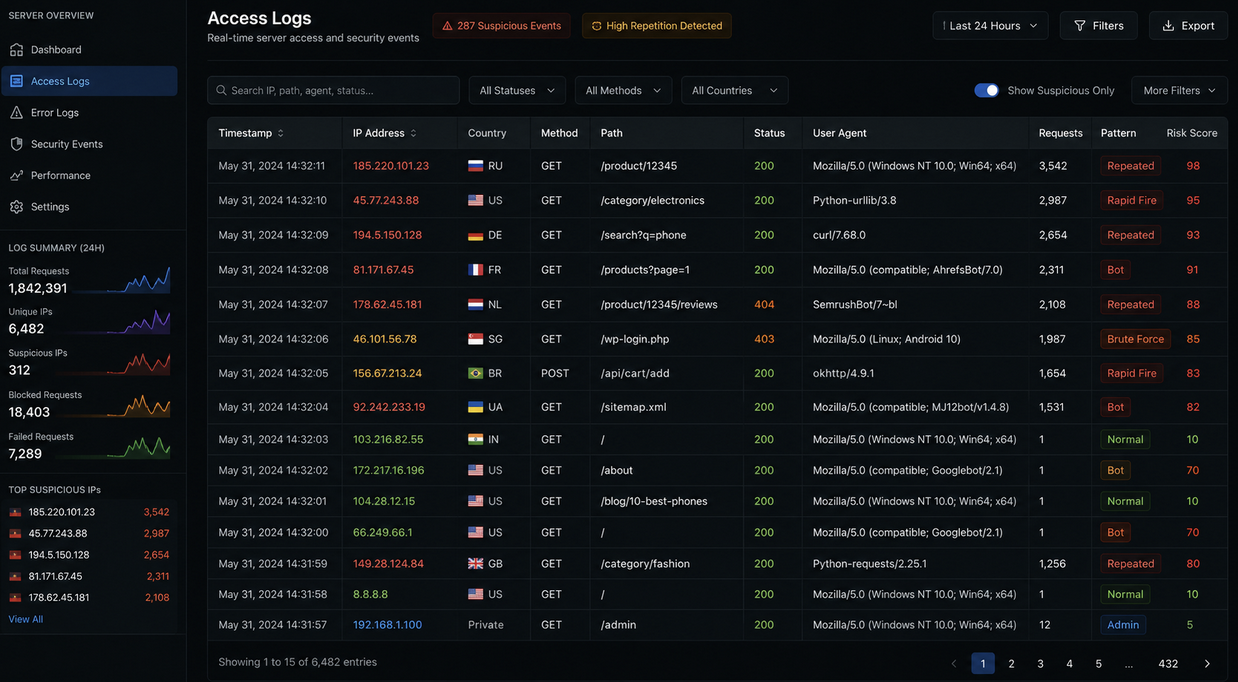

One of the most useful first steps is understanding the origin and nature of the IP addresses making requests to your server. Tools that let you look up IP address details can reveal whether a given address belongs to a data center, a known proxy network, or a residential connection. This kind of visibility can be eye-opening once you start reading your server logs with it in mind.
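As a minimal sketch of that kind of lookup, the example below checks an address against lists of network ranges using Python's standard `ipaddress` module. The ranges shown are reserved documentation blocks used as placeholders; in practice the lists would come from whichever IP intelligence source you rely on, and the function name is an assumption.

```python
import ipaddress

# Placeholder ranges (reserved documentation blocks), NOT real data center
# or proxy networks. Replace them with data from an IP intelligence source.
DATACENTER_NETWORKS = [ipaddress.ip_network("192.0.2.0/24")]
PROXY_NETWORKS = [ipaddress.ip_network("198.51.100.0/24")]


def classify_ip(address):
    """Return 'proxy', 'datacenter', or 'unknown' for an IP address string."""
    ip = ipaddress.ip_address(address)
    if any(ip in network for network in PROXY_NETWORKS):
        return "proxy"
    if any(ip in network for network in DATACENTER_NETWORKS):
        return "datacenter"
    # Not in either list: could be residential, or simply not covered.
    return "unknown"


for candidate in ["192.0.2.10", "198.51.100.7", "203.0.113.5"]:
    print(candidate, classify_ip(candidate))
```

Note that "unknown" does not mean "residential". A static list can only tell you what it knows about, which is why dedicated lookup services exist.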

The Real Cost of Ignoring Bot Traffic

Many businesses assume that because they have not been "hacked" in any obvious sense, they are fine. But bot-related damage does not always look like a breach. It often looks like a slow, quiet erosion of the things that matter most.

Ad spend disappears into thin air. When bots click paid search or display ads, they consume budget without any chance of conversion. Without proper monitoring, that money evaporates month after month, and most advertisers never connect the dots.

Website performance takes a hit too. Automated traffic consumes server resources just like real traffic does. If a significant portion of your load is from bots, you are essentially paying to serve traffic that will never generate a cent in revenue.

Analytics become unreliable. Decisions about content strategy, user experience, and marketing campaigns are grounded in traffic data. If that data is polluted with bot visits, every insight you draw from it is built on a flawed foundation. A landing page might appear to be underperforming when it is actually being flooded with automated noise.

Customer account security is another area that takes a beating. Credential stuffing attacks specifically target login systems. Bots use massive lists of leaked username-and-password combinations and try them systematically against your login page. Even if your platform was not the source of the original breach, your users can still end up compromised.
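The pattern described here, one source trying many different accounts and mostly failing, is something you can look for in your own login records. The following is a sketch under assumptions: the `(ip, username, success)` event shape and both thresholds are hypothetical, and your application would need to log these fields for it to work.

```python
from collections import defaultdict


def suspected_stuffing(events, min_failures=20, min_usernames=10):
    """Return IPs with many failed logins spread across many usernames.

    events: iterable of (ip, username, success) tuples.
    Thresholds are illustrative defaults, not tuned values.
    """
    failures = defaultdict(int)
    usernames = defaultdict(set)
    for ip, username, success in events:
        if not success:
            failures[ip] += 1
            usernames[ip].add(username)
    return sorted(
        ip
        for ip in failures
        if failures[ip] >= min_failures and len(usernames[ip]) >= min_usernames
    )


# Made-up events: one address cycling through 25 accounts, and one user
# who mistyped a password a few times before getting in.
events = [("203.0.113.9", f"user{i}", False) for i in range(25)]
events += [("198.51.100.4", "alice", False)] * 3
events += [("198.51.100.4", "alice", True)]
print(suspected_stuffing(events))
```

Counting distinct usernames is what separates this from an ordinary forgotten password: a real person fails repeatedly against one account, not dozens.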

What Effective Bot Defense Actually Looks Like

Blocking bad bots sounds simple in theory. In practice, it requires a layered approach that distinguishes between different types of automated traffic without accidentally blocking legitimate visitors or search crawlers.

Basic CAPTCHA challenges were once considered sufficient. They no longer are. Sophisticated bots can solve traditional image-based challenges, and even audio CAPTCHAs have been defeated by machine-learning tools. The arms race between bot operators and defenders has accelerated considerably.

Modern platforms like CHEQ offer bot management that takes a fundamentally different approach. Rather than relying on a single challenge mechanism, they analyze behavioral signals in real time. How does the visitor move through the page? Does the timing of actions match human cognitive patterns? Does the device fingerprint align with the declared browser type? Is the IP address associated with known bot infrastructure?

These signals, analyzed together, allow platforms to identify and respond to automated traffic with a precision that simple blocklists could never achieve.
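To make the idea of combining signals concrete, here is a deliberately simple sketch that turns the four questions above into a single score. It is not how CHEQ or any other vendor works; the field names and weights are invented for illustration, and commercial systems use far richer models.

```python
from dataclasses import dataclass


@dataclass
class VisitSignals:
    """Hypothetical yes/no answers to the four questions in the text."""
    natural_page_movement: bool
    human_like_timing: bool
    fingerprint_matches_browser: bool
    ip_on_bot_infrastructure: bool


def bot_score(signals):
    """Weighted score from 0.0 (looks human) to 1.0 (looks automated).

    The weights are assumptions chosen only so that they sum to 1.0.
    """
    score = 0.0
    if not signals.natural_page_movement:
        score += 0.2
    if not signals.human_like_timing:
        score += 0.3
    if not signals.fingerprint_matches_browser:
        score += 0.3
    if signals.ip_on_bot_infrastructure:
        score += 0.2
    return round(score, 2)


shopper = VisitSignals(True, True, True, False)
scraper = VisitSignals(False, False, False, True)
print(bot_score(shopper), bot_score(scraper))
```

The point of a score rather than a single yes/no rule is that no one signal has to be decisive: a visitor on a data center IP who otherwise behaves like a person ends up with a low score instead of an automatic block.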

The best solutions operate invisibly to legitimate users. A real customer browsing your store or signing up for your newsletter experiences no friction whatsoever. The filtering happens in the background, and problematic traffic is blocked or rate-limited before it ever touches the parts of your infrastructure that matter.
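Rate limiting is one of the background mechanisms mentioned here. A common way to implement it is a token bucket, sketched below as one possible approach. The class name, capacity, and refill rate are assumptions; a production setup would usually keep one bucket per client and enforce it at the proxy or edge rather than in application code.

```python
import time


class TokenBucket:
    """Allow short bursts up to `capacity`, then a steady refill rate."""

    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock  # injectable so the behavior can be tested
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self):
        """Spend one token if available; return whether the request passes."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(
            self.capacity, self.tokens + elapsed * self.refill_per_second
        )
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical limit: bursts of 5 requests, refilling 1 request per second.
bucket = TokenBucket(capacity=5, refill_per_second=1.0)
results = [bucket.allow() for _ in range(7)]
print(results)  # the first five pass; rapid follow-ups are refused
```

A person clicking through a site rarely exhausts a bucket like this, which is why the mechanism stays invisible to them while a script issuing requests back to back hits the limit almost immediately.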

For businesses running paid advertising campaigns, this kind of proactive protection is not a luxury. It is a core business requirement. Every fake click that gets filtered out before it charges your account is money saved. Over a full campaign cycle, the savings can far outweigh the cost of the protection itself.
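The arithmetic behind that claim is simple enough to check for your own campaigns. The figures below are entirely hypothetical and are there only to show the calculation; substitute your own click counts, cost per click, and protection cost.

```python
def net_savings(filtered_clicks, cost_per_click, protection_cost):
    """Ad budget preserved by filtering fake clicks, minus what filtering costs."""
    return filtered_clicks * cost_per_click - protection_cost


# Hypothetical campaign cycle: 4,000 fake clicks filtered at 1.50 per click,
# with protection costing 900 over the same period.
print(net_savings(filtered_clicks=4000, cost_per_click=1.50, protection_cost=900))
# prints 5100.0
```

If the result is negative for your numbers, the protection costs more than the fraud it prevents, and that is worth knowing too.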

Building a Smarter, More Resilient Web Presence

Taking bot traffic seriously is ultimately about taking your business seriously. When you have clean, reliable data, every decision you make becomes more defensible. When your ad spend reaches real humans, your return on investment actually reflects reality.

The practical starting point does not require a massive overhaul.

Begin by reviewing your server logs and analytics platform for unusual activity. Sudden spikes in traffic that do not correspond to campaigns or press mentions are worth investigating. High bounce rates combined with suspiciously short session durations can indicate bot visits. Large volumes of failed login attempts should never be dismissed as background noise.
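Spotting a "sudden spike" by eye works for a small site, but the check is easy to automate. The sketch below compares each day's visits against the median of the preceding week. The window length, the multiplier, and the visit counts are all assumptions chosen for illustration.

```python
from statistics import median


def find_spikes(daily_visits, window=7, factor=3.0):
    """Return indexes of days whose visits exceed factor x the recent median.

    window and factor are illustrative defaults, not tuned values.
    """
    spikes = []
    for day in range(window, len(daily_visits)):
        baseline = median(daily_visits[day - window:day])
        if baseline > 0 and daily_visits[day] > factor * baseline:
            spikes.append(day)
    return spikes


# Made-up daily visit counts with one obvious jump on day index 8.
visits = [100, 110, 95, 105, 98, 102, 101, 99, 450, 104]
print(find_spikes(visits))  # prints [8]
```

Using the median rather than the average keeps one abnormal day from inflating the baseline and hiding the next one. A flagged day is only a prompt to investigate; a campaign launch or press mention would trigger it just as readily as a bot run.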

From there, layering in IP intelligence tools helps you understand network-level traffic. Knowing whether requests are coming from residential addresses, known data centers, or flagged proxy services changes the way you respond to them. Some may warrant blocking outright. Others might be throttled or challenged. The goal is surgical precision, not a sledgehammer approach that catches real visitors in the crossfire.
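The block, throttle, or challenge decision can be written down as an explicit policy. What follows is one possible arrangement, not a recommendation: the category names, the score thresholds, and the `verified_crawler` flag are all assumptions, and the score is presumed to come from some separate behavioral analysis on a 0.0 to 1.0 scale.

```python
def choose_action(ip_category, score, verified_crawler=False):
    """Pick a response for a request.

    ip_category: 'residential', 'datacenter', or 'flagged_proxy' (assumed labels)
    score: 0.0 (looks human) to 1.0 (looks automated), from a separate analysis
    verified_crawler: True when a search crawler's identity has been confirmed
    """
    if verified_crawler:
        return "allow"  # never penalize legitimate search crawlers
    if ip_category == "flagged_proxy" and score >= 0.8:
        return "block"
    if ip_category == "datacenter":
        return "challenge" if score >= 0.5 else "throttle"
    if score >= 0.8:
        return "challenge"
    return "allow"


print(choose_action("residential", 0.1))
print(choose_action("datacenter", 0.2))
print(choose_action("flagged_proxy", 0.9))
```

Outright blocking is reserved for the case where both the network and the behavior look bad. Everything in between gets a softer response, which is what keeps real visitors on shared or unusual connections from being locked out.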

Combining network-level intelligence with behavioral analysis gives you a defense posture that adapts over time. Bots evolve. Tactics that worked for attackers a short while ago may already be obsolete, and new techniques emerge constantly. A defense strategy built around real-time signal analysis stays relevant because it responds to behavior rather than fixed signatures.

The internet is not getting simpler. The volume of automated traffic is growing, the sophistication of bad actors is increasing, and the stakes for businesses operating online are higher than ever. But the tools available to defend against these threats have matured considerably.

Website owners who take the time to understand the problem and implement thoughtful defenses will find themselves with a measurable, compounding advantage over those who hope for the best.

Your traffic numbers deserve to mean something. Make sure they do.

Conclusion

Bot traffic is no longer a niche technical issue affecting only large enterprises. It impacts businesses of every size, quietly distorting analytics, consuming infrastructure resources, undermining advertising campaigns, and increasing security risks. As automated traffic continues to grow in sophistication and volume, website owners need visibility into who, or what, is interacting with their platforms.

By combining server log monitoring, IP intelligence, behavioral analysis, and modern bot-management tools, organizations can reduce fraudulent traffic while preserving a smooth experience for legitimate users. The goal is not to block automation entirely, but to separate useful activity from malicious or deceptive behavior so your metrics, infrastructure, and marketing investments reflect reality.

Featured Image generated by ChatGPT.
