Many businesses today run vulnerability assessments and penetration tests. The goal is sound: identify weaknesses before attackers do and reduce risk proactively. Even though they test their systems on a regular basis, many companies still experience breaches, find the same problems over and over, and take a long time to resolve them.

The issue is rarely the absence of testing. It is the way the VAPT (Vulnerability Assessment and Penetration Testing) process is implemented. In many cases, the process becomes a routine compliance task instead of a useful security measure. Reports are generated, vulnerabilities are recorded, and fixes are delayed or partially applied, only for the same issues to appear in the next cycle.

This post looks at the VAPT process most firms follow, why it often fails to lower risk, and what security leaders need to rethink to make it effective.

What the Typical VAPT Process Looks Like

On paper, the VAPT process looks organized and logical.

Most businesses follow a familiar pattern:

- Define scope for testing

- Run automated vulnerability scans

- Perform limited manual exploitation

- Generate a report with risk ratings

- Share findings with remediation teams

This VAPT process meets audit requirements and creates the appearance of proactive security. But effectiveness doesn't depend on the steps themselves. It depends on how they are executed and operationalized.

Why Scoping Decisions Undermine the VAPT Process

Scoping is often treated as an administrative step rather than a strategic one.

Some common problems with scoping are:

- Excluding “sensitive” systems to avoid disruption

- Focusing only on internet-facing assets

- Ignoring identity, cloud, and internal attack paths

- Reusing outdated scopes year after year

When scope does not reflect real attacker behavior, the VAPT process tests what is convenient rather than what is risky.
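One way to make scoping gaps visible is to compare the test scope against an asset inventory. The sketch below is only an illustration: the `Asset` fields, the category names, and the `critical` flag are assumptions, not part of any standard or tool.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Asset:
    name: str
    category: str   # hypothetical labels: "internet-facing", "identity", "internal"
    critical: bool  # hypothetical flag, assumed to be set by the business owner

def scope_gaps(inventory, in_scope_names):
    """Return critical assets missing from the test scope, grouped by category."""
    gaps = {}
    for asset in inventory:
        if asset.critical and asset.name not in in_scope_names:
            gaps.setdefault(asset.category, []).append(asset.name)
    return gaps

# Hypothetical inventory and a scope that only covers the public website
inventory = [
    Asset("public-website", "internet-facing", True),
    Asset("sso-provider", "identity", True),
    Asset("billing-db", "internal", True),
    Asset("dev-wiki", "internal", False),
]
scope = {"public-website"}

gaps = scope_gaps(inventory, scope)
print(gaps)
```

Here the identity provider and the billing database are critical but untested, which is the kind of gap a convenience-driven scope tends to hide.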

Overreliance on Automation

Automation is important, but it is often misused.

In many organizations, the VAPT process relies heavily on automated scanners that:

- Identify known vulnerabilities

- Generate large volumes of findings

- Lack context on exploitability or impact

This creates two problems. First, teams are overwhelmed by low-value issues. Second, more subtle weaknesses like logic flaws, chained attacks, and identity abuse remain undiscovered. Automation without context makes the VAPT process weaker instead of strengthening it.

Reports That Describe Problems but Do Not Drive Action

VAPT reports are often detailed but not actionable.

Some common report challenges include:

- Long lists of vulnerabilities with little prioritization

- Technical descriptions without business impact

- Generic remediation advice

- No clear ownership or deadlines

As a result, findings are acknowledged but not fixed. When the same issues keep coming up, people lose confidence in the VAPT process itself.
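Prioritization does not need to be complicated to be useful. As a rough sketch, a finding's scanner severity can be combined with confirmed exploitability and the business criticality of the affected asset. The weighting below is an assumption chosen for illustration, not an established scoring standard, and the findings are made up.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    title: str
    severity: float         # scanner base score on a 0-10 scale
    exploitable: bool       # confirmed through manual testing
    asset_criticality: int  # 1 (low) to 3 (high), assumed to come from the business

def priority(finding):
    """Illustrative weighting: criticality scales the score, confirmed exploitability doubles it."""
    score = finding.severity * finding.asset_criticality
    return score * 2 if finding.exploitable else score

# Hypothetical findings
findings = [
    Finding("Outdated TLS config on test server", 7.5, False, 1),
    Finding("IDOR in customer invoice API", 6.5, True, 3),
    Finding("Verbose error page", 3.0, False, 2),
]

ranked = sorted(findings, key=priority, reverse=True)
for f in ranked:
    print(f"{priority(f):5.1f}  {f.title}")
```

With these inputs, the exploitable flaw in a business-critical API outranks the finding with the higher raw scanner score, which is the ordering a remediation team actually needs.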

The Remediation Gap No One Owns

Most VAPT projects fail at the remediation stage.

After the tests are over:

- Findings are handed to engineering teams

- Fixes compete with feature delivery

- Security teams lack enforcement authority

- Retesting is delayed or skipped

Without accountability, vulnerabilities linger. A VAPT process that does not include structured remediation and validation is incomplete by design.
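A structured remediation workflow can start as a simple record per finding with an owner, a deadline, and a retest status. The following is a minimal sketch under those assumptions; the field names and status messages are hypothetical and would normally live in a ticketing system rather than a script.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class RemediationItem:
    finding: str
    owner: Optional[str]
    due: Optional[date]
    fixed: bool = False
    retested: bool = False

def needs_attention(items, today):
    """Flag items with no owner or deadline, overdue fixes, and fixes never retested."""
    problems = []
    for item in items:
        if item.owner is None or item.due is None:
            problems.append((item.finding, "no owner or deadline"))
        elif not item.fixed and item.due < today:
            problems.append((item.finding, "overdue"))
        elif item.fixed and not item.retested:
            problems.append((item.finding, "fix not validated by retest"))
    return problems

# Hypothetical remediation backlog
items = [
    RemediationItem("SQL injection in search", "payments-team", date(2024, 3, 1)),
    RemediationItem("Weak password policy", None, None),
    RemediationItem("Exposed admin panel", "platform-team", date(2024, 4, 1), fixed=True),
    RemediationItem("Missing security headers", "web-team", date(2024, 2, 1), fixed=True, retested=True),
]

report = needs_attention(items, today=date(2024, 3, 15))
for finding, reason in report:
    print(f"{finding}: {reason}")
```

Only the last item, which is fixed and retested, drops off the list. Everything else stays visible until someone owns it, fixes it, and validates the fix.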

Why Retesting Is Often Skipped

Retesting is very important, but it is frequently ignored.

Some common reasons are:

- Budget constraints

- Time pressure before audits

- Assumptions that fixes were applied correctly

If you don't retest, you won't know for sure if the vulnerabilities were really fixed. This is one of the most damaging weaknesses in the typical VAPT process, as it creates false confidence.

Misalignment Between VAPT and Real Attack Paths

VAPT often focuses on isolated weaknesses instead of attacker objectives.

Attackers don't exploit vulnerabilities in isolation. They:

- Chain low-severity issues

- Abuse identity and misconfigurations

- Move laterally after initial access

A VAPT process that doesn't consider attack paths provides limited information about real-world risk.
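To show why chaining matters, findings can be modeled as edges in a graph, where each edge means an attacker at one position can reach the next by using a specific weakness. The sketch below uses a plain breadth-first search. The node names and findings are hypothetical, and real attack-path analysis involves far more context than this.

```python
from collections import deque

# Each entry: position -> list of (reachable position, finding used to get there).
# All positions and findings are hypothetical examples.
edges = {
    "internet": [("web-app", "verbose error leaks internal hostname (low)")],
    "web-app": [("ci-server", "default credentials on build agent (medium)")],
    "ci-server": [("cloud-admin", "over-permissive service account (medium)")],
    "vpn": [("file-share", "SMB signing disabled (low)")],
}

def find_attack_path(edges, start, target):
    """Breadth-first search; returns the findings along a shortest path, or None."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == target:
            return path
        for next_node, finding in edges.get(node, []):
            if next_node not in seen:
                seen.add(next_node)
                queue.append((next_node, path + [finding]))
    return None

path = find_attack_path(edges, "internet", "cloud-admin")
for step, finding in enumerate(path, start=1):
    print(f"{step}. {finding}")
```

None of the three findings on this path would be rated high on its own, yet together they lead from the internet to cloud administration. A report that lists them separately would miss that.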

Treating VAPT as a Compliance Exercise

Mindset is one of the most common reasons why the VAPT process fails.

When VAPT is driven primarily by compliance:

- Testing becomes predictable

- Findings are minimized rather than resolved

- Security improvement stalls

Compliance may require VAPT, but compliance alone does not equal resilience. Firms that treat VAPT as a checkbox rarely see meaningful security gains.

Lack of Integration with Security Operations

VAPT findings often exist separately from day-to-day security operations.

This disconnect means:

- SOC teams do not learn from VAPT results

- Detection gaps remain untested

- Incident response playbooks are not validated

An effective VAPT process should inform detection and response, not operate in isolation.
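One simple feedback loop is to compare what the testers did against what the SOC actually alerted on. The sketch below assumes both sides can be reduced to a shared technique label, which is a simplification. The record layout and technique names are hypothetical.

```python
def detection_gaps(test_actions, alerted_techniques):
    """Return tester actions that produced no alert, in their original order."""
    return [a for a in test_actions if a["technique"] not in alerted_techniques]

# Hypothetical actions taken during a penetration test
test_actions = [
    {"technique": "password spraying", "host": "sso-provider"},
    {"technique": "lateral movement via RDP", "host": "file-server"},
    {"technique": "privilege escalation", "host": "ci-server"},
]

# Hypothetical set of techniques the SOC raised alerts for during the test window
alerted = {"password spraying"}

undetected = detection_gaps(test_actions, alerted)
for action in undetected:
    print(f"No alert for {action['technique']} on {action['host']}")
```

Each undetected action is a candidate for a new detection rule or a playbook review, which turns the test into input for the SOC rather than a standalone report.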

What a More Effective VAPT Process Looks Like

Organizations that succeed with VAPT take a different approach.

A well-established VAPT process typically includes:

- Risk-based scoping aligned with attacker behavior

- Balanced use of automation and manual testing

- Clear prioritization tied to business impact

- Structured remediation workflows

- Mandatory retesting to validate fixes

- Feedback loops into SOC and AppSec teams

This approach shifts VAPT from periodic testing to continuous improvement.

When Organizations Should Rethink Their VAPT Approach

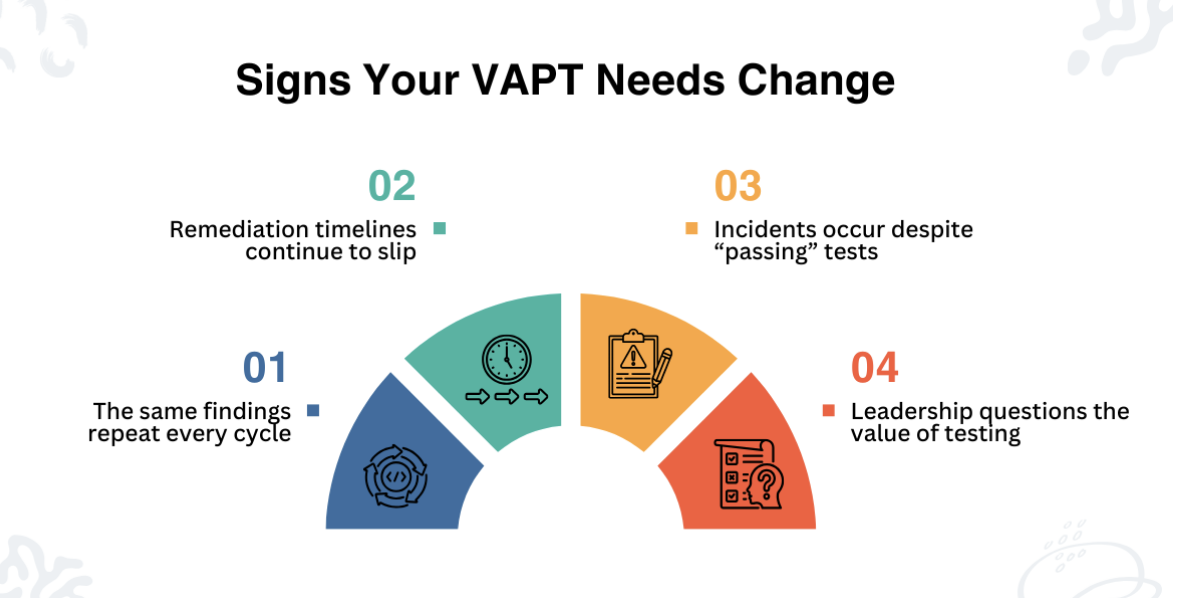

It is time to reassess the VAPT process when:

- The same findings repeat every cycle

- Remediation timelines continue to slip

- Incidents occur despite “passing” tests

- Leadership questions the value of testing

These signals indicate a process problem, not a tooling problem.
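The first signal, repeat findings, is easy to measure if finding identifiers are kept consistent across test cycles. A minimal sketch, assuming each cycle can be represented as a set of such identifiers (the identifiers here are invented):

```python
def recurring_findings(cycles):
    """cycles: list of sets of finding identifiers, oldest first.
    Returns identifier -> number of cycles it appeared in, for identifiers seen more than once."""
    counts = {}
    for cycle in cycles:
        for finding_id in cycle:
            counts[finding_id] = counts.get(finding_id, 0) + 1
    return {fid: n for fid, n in counts.items() if n > 1}

# Hypothetical results from three consecutive test cycles
cycles = [
    {"weak-tls", "default-creds", "open-s3-bucket"},
    {"weak-tls", "default-creds"},
    {"weak-tls", "xss-profile-page"},
]

recurring = recurring_findings(cycles)
print(recurring)
```

A finding that shows up in every cycle points to a remediation or ownership problem, not a detection problem.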

What Organizations Should Reevaluate

Companies that want to get better outcomes should start by evaluating how their VAPT process is carried out, not just whether it exists. Reviewing scoping decisions, remediation ownership, and retesting practices often reveals where value is being lost.

Some organizations work with external security providers to improve how their VAPT programs are executed. For example, CERT-In empanelled firms such as CyberNX focus on aligning testing with real attack paths and ensuring remediation and retesting are part of the process. This type of engagement highlights how VAPT can move beyond compliance toward measurable risk reduction.

Conclusion

The VAPT process most organizations follow is familiar, repeatable, and often ineffective. The failure is not in testing itself, but in how testing is scoped, interpreted, and acted upon.

A VAPT process that prioritizes realism, accountability, and validation is far more useful than one based on schedules and audits. As attackers keep changing, businesses must adapt their VAPT approach from routine assessment to continuous security improvement.

When done right, the VAPT process does more than find vulnerabilities. It reduces risk.

Disclaimer

This article is provided for informational and educational purposes only and does not constitute legal, security, or professional advice. While reasonable efforts are made to ensure accuracy, iplocation.net makes no representations or warranties regarding the completeness, reliability, or suitability of the information presented.

Any references to third-party tools, services, or websites are provided for context only. iplocation.net does not endorse, control, or assume responsibility for the content, accuracy, or availability of external links. Accessing third-party websites is done at the reader’s own discretion and risk.

Readers should consult qualified security, legal, or compliance professionals before making decisions based on the information in this article. iplocation.net shall not be held liable for any loss, damage, or consequences arising from the use or reliance on the content or linked resources.
