Web anti-bot systems have become increasingly sophisticated, evaluating not only how traffic behaves but also where it comes from. Simply using the fastest datacenter proxies will often trigger blocks, while relying exclusively on slower residential IPs can be cost-prohibitive.

The key is to balance performance and trust. Modern proxy strategies route easy traffic through cheap datacenter IPs and switch to clean residential or ISP-based proxies for sensitive or protected endpoints. In practice, this means using datacenter proxies for bulk scraping and reserving high-trust, ISP-registered addresses for login flows, checkouts, or targets with strong anti-bot defenses.

In this article, we explain how IP reputation scores are built (via ASNs, blocklists, and behavioral signals), why datacenter IPs tend to burn out quickly, and how hybrid proxy architectures can maintain high success rates. We’ll also cover common mistakes and real-world use cases, and look ahead to how AI and device fingerprinting are changing the game (hint: IPs alone won’t win the future).

What Is IP Reputation?

IP reputation is essentially a trust score for an IP address or range based on historical behavior. In the simplest terms, it answers: “Have we seen this IP behaving badly in the past?” If an IP has been used for spam, abuse, credential stuffing, or bot traffic before, its reputation goes down.

In practical terms, web platforms and security vendors aggregate many such data points to determine whether a given IP address appears legitimate. A barrage of concurrent requests from an AWS range, for example, will lower trust; a dynamically changing IP that only appears briefly may be suspicious if it lines up with bot-like behavior.

Some key signals that feed into an IP’s reputation include known blocklist listings (spam, malware, TOR exit nodes), traffic volume spikes, geographic anomalies, and Autonomous System Number (ASN) ownership. Patterns such as repeated login attempts or credential stuffing attacks can significantly impact how an IP is evaluated.

IPs belonging to well-known cloud providers (AWS, Google Cloud, Azure) often inherit neutral or low trust by default, because cloud ASNs are commonly used for automation. In other words, many hosting providers' IPs start with a negative bias. By contrast, consumer ISP IPs (assigned to home routers or mobile devices) tend to have higher baseline trust because they appear to be “real user” traffic.

Websites and bot-management systems constantly update reputation scores. They watch for patterns such as repeated failed login attempts from a single IP address or a history of security challenges. Over time, if an IP (or its subnet) is seen in attacks, its score drops, and future requests may trigger tougher checks (CAPTCHA, blocks, MFA, etc.).

The Practice of Scoring IPs Is Dynamic

An IP might start fresh, pick up a bad mark, and gradually recover if it goes unused for a while. If the same IP moves in lockstep across many endpoints, or if a set of IPs (like those in a proxy pool) constantly refreshes its addresses, that pattern can also degrade trust even when none of the addresses appears on an explicit blocklist.
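To make the idea of a moving score concrete, here is a toy model in Python. It is not how any vendor computes reputation (those formulas are not public). The 0-100 scale, the per-network baselines, and the 30-day half-life are all assumptions chosen purely for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical baselines: hosting ASNs start lower than consumer ISP ASNs.
BASELINE = {"hosting": 50.0, "isp": 80.0}


@dataclass
class IpReputation:
    """Toy reputation score on an assumed 0-100 scale (higher = more trusted)."""

    network_type: str
    score: float = field(init=False)

    def __post_init__(self) -> None:
        # A fresh IP starts at the baseline for its network type.
        self.score = BASELINE[self.network_type]

    def penalize(self, points: float) -> None:
        """Apply a bad mark, e.g. a blocklist hit or a burst of failed logins."""
        self.score = max(0.0, self.score - points)

    def recover(self, idle_days: float, half_life_days: float = 30.0) -> None:
        """Drift back toward the baseline while the IP stays quiet."""
        baseline = BASELINE[self.network_type]
        deficit = baseline - self.score
        self.score = baseline - deficit * 0.5 ** (idle_days / half_life_days)


ip = IpReputation("isp")   # starts at 80
ip.penalize(40)            # drops to 40
ip.recover(idle_days=30)   # half of the 40-point deficit is forgiven
print(ip.score)            # 60.0
```

The exponential recovery is only one plausible shape; the point is that the score depends on history and elapsed time, not on a fixed label.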

Why Datacenter IPs Burn Faster

Datacenter proxies remain extremely popular because they are cheap, fast, and easy to scale. A single high-powered server in a colocation facility can host hundreds of virtual proxies, making 1,000+ IPs very affordable. These IPs offer very low latency and high bandwidth, which is ideal for bulk scraping, SEO rank tracking, QA testing, and other volume-oriented tasks.

However, those same properties make datacenter IPs easy to detect and blocklist. The IPs come from contiguous address blocks owned by hosting companies. When an IP address in that block “misbehaves,” a website or CDN can simply block the entire subnet.
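A short sketch shows why contiguous blocks are so easy to ban. It uses Python's standard `ipaddress` module; the /24 block size and the function names are assumptions for illustration, and the addresses come from ranges reserved for documentation.

```python
import ipaddress

blocked_subnets = set()


def subnet_of(ip: str, prefix: int = 24):
    """Return the enclosing network, e.g. 203.0.113.7 -> 203.0.113.0/24."""
    return ipaddress.ip_network(f"{ip}/{prefix}", strict=False)


def report_abuse(ip: str) -> None:
    """One misbehaving address gets its whole subnet blocked."""
    blocked_subnets.add(subnet_of(ip))


def is_blocked(ip: str) -> bool:
    return subnet_of(ip) in blocked_subnets


report_abuse("203.0.113.7")
print(is_blocked("203.0.113.200"))  # True: same /24 as the offender
print(is_blocked("198.51.100.7"))   # False: different block
```

A proxy pool whose addresses all sit in one or two such blocks can lose most of its capacity after a single incident.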

Furthermore, datacenter proxies often move traffic in obviously automated ways. Many bots on datacenter IPs make massive numbers of concurrent requests or have highly regular timing. Anti-bot systems have learned to spot these patterns.

Why Residential and ISP Proxies Carry More Trust

In contrast, residential proxies route traffic through IP addresses that ISPs assign to home or mobile internet subscribers. These addresses appear legitimate to target sites. A home-user IP address is listed in the target’s Geo-IP and ASN databases as belonging to, say, “Comcast Inc.” or “British Telecom”. When your request comes from such an IP, it naturally inherits the trust of an ordinary user.

Residential IPs blend in with real traffic. Sites treat them as genuine consumers, so you encounter fewer outright blocks and CAPTCHAs. They also allow finer geo-targeting, down to city or ZIP code, since they come from actual home ISP assignments. However, this trust comes at a price.

Quality residential proxies are typically slower (limited by a home connection) and more expensive, often charged per gigabyte of use. As one analysis notes, premium residential bandwidth can cost 4–10× as much as datacenter proxies. Latency is also higher, since your traffic may pass through consumer-grade connections.

A newer hybrid category, often called ISP proxies or static residential proxies, aims to bridge these worlds. These proxies run on datacenter infrastructure for stability and speed, but their IPs are formally registered with real ISPs. In other words, they are datacenter-hosted but ISP-registered.

Beyond IP: Modern Anti-Bot Signals

Increasingly, platforms consider far more than just IP reputation. Simply using a “trusted” IP is not enough if other signals scream “automation”. Today’s bot mitigation systems (DataDome, Akamai Bot Manager, Cloudflare Bot Management, PerimeterX, etc.) inspect multiple layers of each request. These include browser and network fingerprints, request headers, TLS details, session continuity, cookies, and actual user behavior on the page.

Blending datacenter and residential proxies improves success, but you also have to blend other signals. A hybrid proxy strategy only works if your browser automation matches a real user at each step. This includes sending headers in the order a real browser would, enabling HTTP/2, maintaining cookie sessions, and imitating human-like wait times and navigation. As a rule of thumb, each page view should look organic, not bot-like.

Hybrid Proxy Routing in Practice

Given these insights, how can one use proxies to avoid being blocked? The winning approach is often risk-based routing: send each request through the proxy type that best matches the target's risk level. A common pattern is:

- Start broad with fast datacenter proxies. Use them for cheap, open scraping tasks, e.g., crawling public category pages, fetching sitemaps, or doing SEO rank checks on non-sensitive sites. This maximizes throughput and minimizes cost.

- Switch to residential or ISP proxies for sensitive requests. When approaching login forms, checkout pages, or sites known to have strict bot protection, switch to a high-trust IP from a reputable provider; some providers, including Proxy-Cheap, offer ISP-based IPs suited to this. These proxies give your session a real-user identity, allowing you to get past blocks that would stop datacenter IPs. For example, many e-commerce pipelines use datacenter proxies to gather product listings, then “hand off” to static residential proxies for price checks or order placements.

- Tune the mix continuously. Monitor success rates in real time. If your datacenter IPs start getting CAPTCHAs on a given endpoint, automatically shift those requests to the residential pool. It is fine if residential IPs sit idle: use them sparingly, only for the “hard” parts. Over time, this adaptive strategy keeps costs down while minimizing blocks.

In essence, datacenter proxies are the workhorses for volume and speed, while residential/ISP proxies are the safety net for protected, high-value actions.
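The "tune the mix continuously" step can be sketched as a sliding window of recent outcomes per endpoint. The class name, window size, minimum sample count, and 80% threshold below are all hypothetical; real values would come from your own traffic.

```python
from collections import deque


class SuccessWindow:
    """Tracks recent request outcomes for one endpoint on the datacenter pool."""

    def __init__(self, size: int = 50, min_samples: int = 10, threshold: float = 0.8):
        self._results = deque(maxlen=size)  # old outcomes fall off automatically
        self._min_samples = min_samples
        self._threshold = threshold

    def record(self, ok: bool) -> None:
        self._results.append(ok)

    def rate(self) -> float:
        """Success rate over the window; 1.0 when nothing is recorded yet."""
        if not self._results:
            return 1.0
        return sum(self._results) / len(self._results)

    def should_escalate(self) -> bool:
        """True once enough samples exist and the success rate is too low."""
        enough = len(self._results) >= self._min_samples
        return enough and self.rate() < self._threshold


window = SuccessWindow(size=20, min_samples=5, threshold=0.8)
for ok in [True, True, False, False, False]:
    window.record(ok)
print(window.rate())             # 0.4
print(window.should_escalate())  # True: shift this endpoint to the residential pool
```

Requiring a minimum number of samples avoids switching pools because of one unlucky request.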

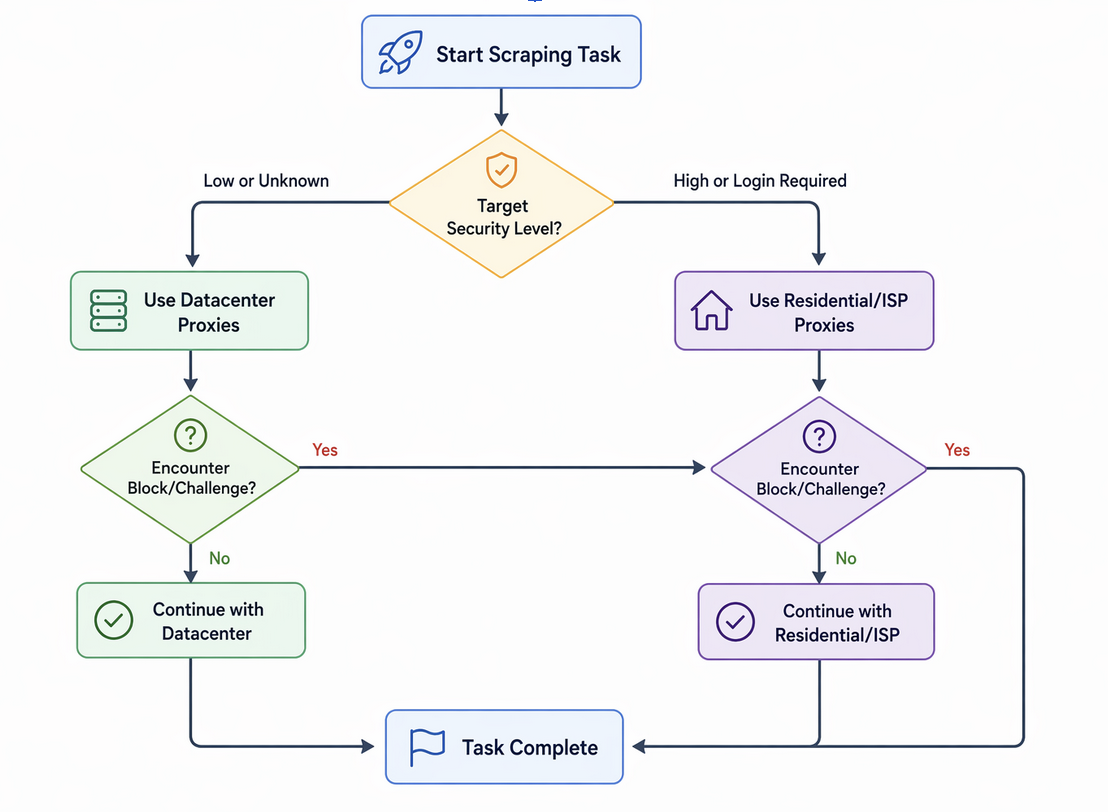

Below is a flowchart illustrating a simple hybrid routing decision process:

In this workflow, non-sensitive requests default to fast datacenter proxies, but any time a block or stricter endpoint is detected, traffic is rerouted to the trusted residential pool. (In practice, your scraper would dynamically evaluate target pages and trigger the switch based on HTTP status codes or content analysis.)
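As a rough sketch, the decision described above might look like the following in Python. The path hints, status codes, and CAPTCHA marker are assumptions; a real scraper would tune them per target, and the function names are hypothetical.

```python
from enum import Enum


class Pool(Enum):
    DATACENTER = "datacenter"
    RESIDENTIAL = "residential"


# Assumed heuristics, not a standard: adjust per target site.
SENSITIVE_PATH_HINTS = ("/login", "/checkout", "/account")
BLOCK_STATUS_CODES = {403, 429}
CAPTCHA_MARKERS = ("captcha",)


def initial_pool(path: str) -> Pool:
    """Sensitive endpoints start on the high-trust pool; everything else is cheap."""
    if any(hint in path.lower() for hint in SENSITIVE_PATH_HINTS):
        return Pool.RESIDENTIAL
    return Pool.DATACENTER


def looks_blocked(status: int, body: str) -> bool:
    """Detect a block from the HTTP status code or from the page content."""
    if status in BLOCK_STATUS_CODES:
        return True
    return any(marker in body.lower() for marker in CAPTCHA_MARKERS)


def pool_for_retry(current: Pool, status: int, body: str) -> Pool:
    """Escalate to the residential pool when the response looks like a block."""
    if looks_blocked(status, body):
        return Pool.RESIDENTIAL
    return current


print(initial_pool("/category/shoes"))                   # Pool.DATACENTER
print(initial_pool("/checkout/cart"))                    # Pool.RESIDENTIAL
print(pool_for_retry(Pool.DATACENTER, 429, ""))          # Pool.RESIDENTIAL
print(pool_for_retry(Pool.DATACENTER, 200, "<html>ok"))  # Pool.DATACENTER
```

Keeping the detection logic in one small function makes it easy to add site-specific checks without touching the routing itself.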

Common Mistakes That Destroy IP Reputation

Overloading Single IPs

Sending too many requests from one IP in a short time is a sure way to trigger rate limits. Even a clean residential IP can rapidly lose trust if it spikes with suspicious volume. Real users browse at a human pace; hundreds of refreshes in a few minutes will get noticed. Distributing requests across many IPs and inserting random delays are essential to avoid triggering per-IP limits or anomaly alerts.
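One way to enforce this is a rotator that cycles through proxies and reports how long to wait before reusing each one. This is a minimal sketch: the class name and the 5-second minimum interval are assumptions, and the caller passes in the current time (and does the actual sleeping) so the logic stays easy to test.

```python
import itertools


class ProxyRotator:
    """Round-robin over proxies with a minimum gap between uses of the same one."""

    def __init__(self, proxies, min_interval_s: float = 5.0):
        self._cycle = itertools.cycle(proxies)
        # -inf means "never used", so the first use never has to wait.
        self._next_free = {p: float("-inf") for p in proxies}
        self._min_interval_s = min_interval_s

    def acquire(self, now: float):
        """Return (proxy, seconds_to_wait) for the next request."""
        proxy = next(self._cycle)
        wait = max(0.0, self._next_free[proxy] - now)
        # Reserve the proxy until min_interval_s after this request is sent.
        self._next_free[proxy] = now + wait + self._min_interval_s
        return proxy, wait


rotator = ProxyRotator(["proxy-a", "proxy-b"], min_interval_s=5.0)
print(rotator.acquire(now=0.0))  # ('proxy-a', 0.0)
print(rotator.acquire(now=1.0))  # ('proxy-b', 0.0)
print(rotator.acquire(now=2.0))  # ('proxy-a', 3.0): used 2s ago, needs 3s more
```

In a real scraper `now` would typically come from `time.monotonic()`, and the wait would be combined with a random delay rather than used on its own.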

Reusing “Dirty” IP Pools

Cheap or free proxy pools often recycle addresses that were abused by others. If your proxy was used before for spam or scraping, it may already be on various blocklists. Simply rotating through a known-bad IP pool won’t help; the block logic will catch up to you. Always procure IPs from reputable providers that regularly refresh their inventory and monitor blocklist status. For critical sessions, use static ISP-assigned IPs with guaranteed uptime instead of ephemeral shared proxies.

Ignoring Browser and Device Signals

Proxies only mask the IP layer. If your headless browser or HTTP client has inconsistent or missing fingerprints, the IP’s trust won’t save you. For example, if your client claims to be Chrome on Windows but its JavaScript signatures or HTTP/2 handshake don’t match any real browser profile, anti-bot systems will penalize the session. These systems are designed to detect even minor mismatches in TLS parameters, header ordering, or JavaScript API support. Ensure your scraper’s browser automation (or stealth browser) mimics a real device consistently. That means up-to-date browser versions, realistic screen resolutions, consistent timezone/cookies, and even “normal” TLS fingerprints.

Geographic Jumping

Abrupt changes in location raise red flags. For example, if you log into a U.S. site with a New York IP and then immediately use an IP from Germany, security systems will suspect an account hijack. Many sites track login history, and switching geos can trigger verification challenges. When operating a single session or account, stick to one locale and use proxies that are actually from that region to maintain credibility.
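A simple way to avoid accidental geo-jumps is to pin each account to one proxy in its home region. The sketch below does this with a stable hash; the region keys and `.example` hostnames are placeholders, not real endpoints.

```python
import hashlib

# Hypothetical inventory: proxies grouped by the region they exit from.
PROXIES_BY_REGION = {
    "us-ny": ["ny-1.proxy.example", "ny-2.proxy.example", "ny-3.proxy.example"],
    "de": ["de-1.proxy.example", "de-2.proxy.example"],
}


def sticky_proxy(account_id: str, region: str) -> str:
    """Always map the same account to the same proxy within its region."""
    pool = PROXIES_BY_REGION[region]
    # hashlib gives a hash that is stable across runs, unlike built-in hash().
    digest = hashlib.sha256(account_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:4], "big") % len(pool)
    return pool[index]


first = sticky_proxy("alice@example.com", "us-ny")
second = sticky_proxy("alice@example.com", "us-ny")
print(first == second)  # True: the account never hops between exits
```

If the pool for a region changes, some accounts will be remapped; storing the assignment explicitly is the more robust option for long-lived accounts.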

Acting Too Robotically

High-speed, perfectly paced requests are themselves a signal. Advanced bot detectors analyze behavioral patterns on the page (mouse movement, scroll timing, click intervals). Humans never click in perfect 500ms increments or navigate pages without hesitation. If your scraper snaps through actions with millisecond consistency, it stands out. Add small random variations to delays and navigation; they help keep your IP reputation intact because they mimic human imperfection.
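A minimal sketch of irregular pacing, using only the standard library. The mean, spread, and floor are assumed values; nothing here claims to reproduce real human timing, only to avoid fixed intervals.

```python
import random


def human_delay(rng: random.Random, mean_s: float = 2.0,
                spread_s: float = 1.0, floor_s: float = 0.3) -> float:
    """Return a randomized pause in seconds, never shorter than floor_s."""
    return max(floor_s, rng.gauss(mean_s, spread_s))


rng = random.Random()  # pass random.Random(seed) for reproducible test runs
delays = [human_delay(rng) for _ in range(5)]
print([round(d, 2) for d in delays])  # five different pauses, each >= 0.3
```

The caller would pass each value to `time.sleep()` between actions; the function itself only computes the pause, which keeps it easy to test.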

Best Use Cases for Hybrid Proxy Strategies

Hybrid proxy setups shine in several industries that balance data needs with detection risk. Consider these examples:

E-commerce Price Monitoring

A retail price scraper might use datacenter proxies to gather broad category and listing pages at high speed. Once it identifies specific product or checkout pages (which often have stricter bot protections), it switches to a residential or ISP proxy to fetch pricing and inventory. This ensures continuous coverage without blowing the budget on residential bandwidth.

Travel Fare Aggregation

Price comparison services and online travel agency (OTA) websites follow a similar pattern. Pulling flight or hotel listings across many sources is feasible with datacenter proxies. But when confirming actual booking details or logging into travel APIs, firms often use ISP proxies to appear as genuine local customers. Many airlines and hotel chains heavily scrutinize datacenter IP ranges for login and checkout flows. In practice, aggregators reserve their residential IPs for the final fare check or seat selection steps to avoid being locked out by anti-bot checks.

Ad Verification and SEO Monitoring

Agencies verifying how ads or search results appear in different regions use residential IPs to mimic actual users. For example, confirming localized ad placements or search rankings often requires the credibility of ISP-assigned addresses. By contrast, crawling generic SEO data (keywords, backlinks, content indexing) can often leverage datacenter proxies. Many firms adopt an ISP and datacenter mix, using datacenter IPs for large-scale crawling and residential proxies for more sensitive, localized checks.

Social Media and Multi-Account Management

Platforms like Facebook, Instagram, or Twitter use aggressive bot defenses. Teams handling large numbers of social media accounts typically use residential or mobile proxies for account logins, because they must appear as normal users from various locations. Datacenter proxies rarely work for such tasks because shared hosting IPs are often flagged by platform security. ISP or static proxies are especially useful for maintaining session continuity.

Brand Protection and Scraper Tools

Companies scraping high-security sites, such as ticketing or inventory platforms, often rely on residential or ISP proxies for endpoints involving login or payment. Datacenter proxies are fine for public data, such as prices or event information, but once stronger anti-scraping protections are encountered, higher-trust IPs are required. These tools often switch proxies mid-flow as data sensitivity increases.

Future Trends: AI, Device Identity, and the Decline of Sole IP Trust

The proxy arms race continues to evolve. Recent research shows that heavy reliance on residential proxies is weakening traditional IP reputation models. For example, GreyNoise found that 39% of attacker traffic originated from home network IPs, likely through residential proxy services. Even more concerning, 78% of those residential IP-based attacks were invisible to existing reputation feeds.

As attackers increasingly use rapidly rotating, short-lived residential IPs, static IP blocklists and ASN-based reputation signals are becoming less effective. This shift is driving greater reliance on behavioral analysis, device fingerprinting, and AI-driven detection systems.

Actionable Checklist for Implementers

- Assess target defenses: Before large crawls, analyze each site’s defenses. If it has aggressive bot protections or login steps, plan to use residential or ISP proxies. Otherwise, use faster datacenter IPs.

- Prioritize fresh IPs: Use reputable proxy pools and rotate addresses frequently. Avoid providers that resell blocked IPs or lack transparency. Consider static residential or ISP proxies for critical sessions.

- Match browser fingerprints: Align headers, JavaScript execution, and TLS client hello with a genuine browser and device profile. Maintain consistent cookies and session tokens, and incorporate mouse movements or scrolls where applicable.

- Control request speed: Do not blast pages at full speed. Insert randomized delays between clicks and navigation to simulate human reaction times. Keep request volumes per IP within typical user limits.

- Maintain geographic consistency: Stick to IPs that align with the user’s expected location. Avoid unrealistic geographic jumps within a single session. Use geo-targeted residential proxies when needed.

- Handle blocks dynamically: Implement logic to detect when a proxy triggers a block (e.g., status codes, CAPTCHAs, or content checks). Automatically switch to a higher-trust proxy or fallback method in those cases.

- Monitor performance and cost: Track success rates and costs for each proxy type. If datacenter proxies begin to fail frequently, increase the use of ISP proxies for those paths. If residential proxies remain underused, scale back to control costs.

- Use unified proxy management tools: Where available, use APIs that support mixed proxy pools (datacenter, ISP, residential) to simplify switching. Consider anti-detection tools, such as stealth browsers or browser rotation solutions, to maintain fingerprint consistency.
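For the cost-monitoring item, a useful number is cost per successful request rather than cost per request, because failed requests still cost money. The sketch below uses made-up relative prices (residential at 6× datacenter, within the 4–10× range mentioned earlier); the function names are hypothetical.

```python
def cost_per_success(cost_per_request: float, success_rate: float) -> float:
    """Effective cost of one successful response, counting wasted attempts."""
    if not 0.0 < success_rate <= 1.0:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_request / success_rate


def cheaper_pool(dc_cost: float, dc_rate: float,
                 res_cost: float, res_rate: float) -> str:
    """Pick the pool with the lower effective cost for a given endpoint."""
    dc = cost_per_success(dc_cost, dc_rate)
    res = cost_per_success(res_cost, res_rate)
    return "datacenter" if dc <= res else "residential"


# Open endpoint: datacenter succeeds 90% of the time, so it stays cheaper.
print(cheaper_pool(1.0, 0.90, 6.0, 0.95))  # datacenter
# Protected endpoint: datacenter succeeds only 10% of the time.
print(cheaper_pool(1.0, 0.10, 6.0, 0.95))  # residential
```

With these assumed numbers, datacenter proxies stop being the cheaper choice once their success rate falls below roughly 16% on an endpoint (1.0 / 0.16 is about 6.3, the residential cost per success).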

Conclusion

Web anti-bot systems continue to evolve beyond simple IP-based detection, making it increasingly important to balance performance with trust. While datacenter proxies offer speed and scale, residential and ISP proxies provide the credibility needed for sensitive interactions.

A hybrid approach, combined with consistent behavioral signals and proper IP management, allows organizations to maintain high success rates without unnecessary cost. As detection systems shift toward AI-driven analysis and device fingerprinting, relying on IPs alone is no longer sufficient.

Ultimately, effective proxy usage is not about selecting a single solution, but applying the right strategy at the right time while ensuring traffic appears natural, consistent, and trustworthy.

Disclaimer

This article is provided for informational and educational purposes only. It does not promote or encourage bypassing security measures, violating terms of service, or engaging in unauthorized data collection activities.

Techniques related to proxy usage, automation, and IP management should be applied responsibly and in compliance with applicable laws, platform policies, and ethical guidelines. Readers are responsible for ensuring their use of these technologies aligns with legal and contractual requirements.

References to tools, proxies, or infrastructure are intended to explain general concepts and industry practices, not to endorse or facilitate misuse.

Featured Image generated by ChatGPT.
